AI Chatbots for Healthcare: Answering FAQs While Staying Compliant

Healthcare practices are deploying AI chatbots to handle the enormous volume of administrative inquiries they receive daily - while staying on the right side of HIPAA and patient safety obligations.

Every healthcare practice operates with a paradox at its front desk. The staff are among the most trained, credentialed people in any service industry - and they spend the majority of their working hours answering the same dozen questions on repeat. What are your hours? Do you accept my insurance? How do I prepare for my colonoscopy? Where is parking? Can I reschedule my appointment? How do I request a prescription refill?

These are not clinical questions. They do not require a medical background to answer. They require accurate information and fast delivery - and they arrive in volumes that consistently overwhelm even well-staffed practices.

According to the Medical Group Management Association, front desk staff in primary care and specialty practices spend 40-60% of their working hours on repetitive administrative interactions. In multi-provider practices, this translates to the equivalent of one or more full-time employees whose entire output is answering questions that, in principle, a well-designed information system could handle.

AI chatbots, deployed and scoped correctly, can absorb the majority of this volume - reducing staff burden, improving patient response times, and raising satisfaction scores. The operative phrase is "scoped correctly." Healthcare is an industry where the consequences of an AI system operating outside its appropriate boundaries are not just a poor customer experience - they are a patient safety issue and a regulatory liability.

This article examines what AI chatbots can and cannot appropriately handle in healthcare settings, the compliance requirements governing their deployment, and a practical implementation framework for practices evaluating this technology.

The Administrative Inquiry Problem in Healthcare

The volume of administrative inquiries hitting healthcare practices is not a minor inefficiency - it is a structural operational challenge that worsens as patient panels grow and staff turnover increases.

A typical primary care practice with 3-4 physicians and 2,000-3,000 active patients receives an estimated 80-150 inbound calls per day, the majority of which are administrative. A specialty practice with longer appointment preparation requirements - gastroenterology, radiology, oncology - handles even higher ratios of informational calls relative to clinical ones.

The patient experience consequences are direct and measurable. When phone wait times exceed two to three minutes, patient satisfaction scores drop. When after-hours calls reach voicemail for administrative questions that could have been answered digitally, patients experience friction that accumulates into dissatisfaction and, increasingly, into switching to another practice.

The staff consequences are equally significant. Burnout rates in healthcare administration are high, and repetitive administrative work is a primary driver. The Medical Group Management Association has documented that reducing administrative call burden is one of the highest-impact interventions for reducing front desk turnover - a cost that typically runs $3,000-7,000 per replacement hire when recruiting, training, and lost productivity are accounted for.

What AI Chatbots Can Appropriately Handle in Healthcare

The scope question is the most important design decision in a healthcare AI chatbot deployment. What the chatbot handles, and what it does not, determines both its value and its compliance posture.

The appropriate scope encompasses administrative and informational functions - specifically the categories where accurate, helpful responses do not require clinical judgment and where errors carry limited clinical risk.

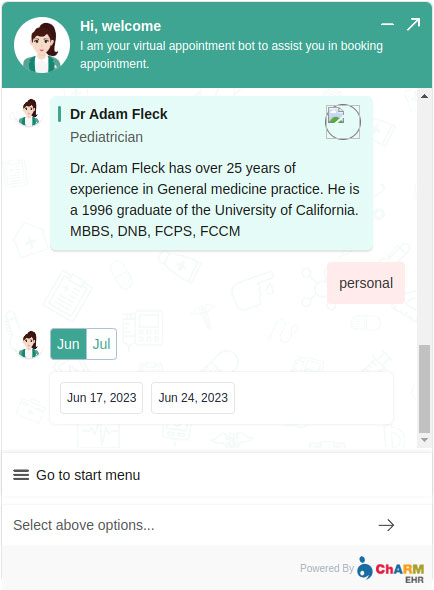

1. Appointment Scheduling and Management

Appointment booking is the highest-ROI chatbot function in most practices. When integrated with the practice management system, the chatbot handles new appointment requests, reschedules, cancellations, and appointment reminders without requiring phone contact. For high-volume practices, this represents a substantial reduction in staff time - and a better patient experience, since patients can book at 11pm on a Sunday rather than waiting for Monday morning.

2. Insurance and Billing Questions

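A significant proportion of inbound calls are insurance-related: "Do you accept [insurer]?" "I have a high-deductible plan - will I need to pay at the time of service?" "How does my copay work?" "I received a bill I don't understand." These questions follow predictable patterns. General coverage and payment-policy questions can be answered accurately from the practice's billing policies and insurer list without any PHI exchange. Questions about a specific bill depend on account details, so the chatbot should explain the general billing process and route the patient to billing staff.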

3. Hours, Locations, Services, and Directions

Basic informational queries are among the highest-volume and easiest to automate: office hours, holiday closures, location addresses, parking instructions, telehealth availability, and the services and specialties offered. A chatbot that answers these questions instantly - at any hour - eliminates a significant portion of inbound call volume with minimal compliance risk, since the answers involve no patient information.

4. Pre-Appointment Preparation Instructions

Preparation instructions for procedures and appointments are a major source of patient confusion and no-show risk. "Don't eat or drink after midnight." "Bring your insurance card and a list of current medications." "Stop taking blood thinners 5 days before the procedure, and confirm this with your prescribing physician." These instructions are standardized, can be pulled from the practice's existing documentation, and are best delivered in writing - which is exactly what a chatbot does.

5. Prescription Refill Request Routing

While prescribing decisions require physician involvement, the process of accepting a refill request and routing it to the appropriate clinical staff member is administrative. A chatbot that collects the patient's name, date of birth, medication, and pharmacy information, then routes that structured request to the appropriate inbox, is performing a documentation and routing function - not a clinical one.
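
The routing function described above can be sketched as a small data structure plus a hand-off. This is a minimal illustration, not a reference to any particular platform: `RefillRequest`, `route_refill`, the `pending_clinical_review` status, and the in-memory `inbox` list are all hypothetical names, and a real deployment would deliver the ticket to the practice's own secure messaging or EHR inbox.

```python
from dataclasses import dataclass, asdict
from datetime import date


@dataclass(frozen=True)
class RefillRequest:
    """Only the four fields named in the article; nothing clinical is collected."""
    patient_name: str
    date_of_birth: date
    medication: str
    pharmacy: str


def route_refill(request: RefillRequest, inbox: list[dict]) -> dict:
    """Append a structured ticket to a clinical inbox (hypothetical: a plain list).

    The function never approves or denies anything; it only documents and routes.
    """
    # Reject blank text fields so staff never receive a half-filled request.
    for field_name, value in asdict(request).items():
        if isinstance(value, str) and not value.strip():
            raise ValueError(f"missing required field: {field_name}")

    ticket = {
        "type": "refill_request",
        "status": "pending_clinical_review",  # the decision stays with clinical staff
        **asdict(request),
    }
    inbox.append(ticket)
    return ticket
```

Restricting the structure to these four fields also keeps the request in line with the minimum necessary standard: the chatbot has no slot in which to record symptoms or other clinical detail.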

6. After-Hours Informational Guidance

After-hours triage guidance - helping a patient decide whether to go to urgent care or wait until morning - occupies a gray zone that requires careful scoping. Chatbots that provide general, population-level guidance ("symptoms that typically warrant urgent care include high fever, difficulty breathing, severe pain, or any situation you feel is an emergency") are providing information, not clinical advice. The distinction is meaningful: general safety information that directs patients to appropriate resources is appropriate. Individual clinical assessment is not.

7. New Patient Intake Assistance

New patient onboarding involves considerable paperwork and information exchange. A chatbot that guides new patients through intake form completion, explains what to expect at their first visit, confirms insurance information, and answers questions about the practice's procedures reduces staff time at registration and creates a better first impression.

What AI Chatbots Cannot Handle: The Compliance Boundary

The boundary is not arbitrary. It exists because AI language models, regardless of their sophistication, cannot examine a patient, review their full medical history, or take clinical responsibility for their recommendations. Operating across this boundary creates both patient safety risk and regulatory exposure.

Individualized Medical Advice

Questions that ask the chatbot to evaluate a patient's specific symptoms, condition, or situation - "I have a rash that started yesterday, what is it?" "My blood sugar has been high all week, is that dangerous?" "My knee has been hurting since I fell last month, do I need an X-ray?" - require clinical judgment. A chatbot that attempts to answer these questions risks crossing into the unlicensed practice of medicine, regardless of how its response is framed.

The appropriate response is: "That's a question for your care team. I can help you schedule an appointment, or if you believe this is urgent, I can help you understand your options for urgent or emergency care."

Test Result Interpretation

Patients routinely contact practices to ask about their test results. A chatbot should never interpret, characterize, or evaluate test results in response to a patient inquiry. "Your lab results are in the patient portal and will be reviewed with you by your provider" is the appropriate response, not an interpretation of values the chatbot may have access to.

Prescription Recommendations

Questions about medication - what to take, how much, whether it is safe to combine with another medication - require pharmacological expertise and knowledge of the patient's full medication history. Any chatbot that attempts to answer these questions creates clinical and legal liability regardless of disclaimers.

Mental Health Crisis Response

A patient disclosing suicidal ideation, self-harm, or an acute mental health crisis through a chatbot interface must be immediately directed to the appropriate human resource: a crisis line (988 Suicide and Crisis Lifeline), an emergency service, or a live clinical staff member. This is the highest-stakes escalation scenario in healthcare chatbot design, and it requires a dedicated, tested response path - not a generic "I'll escalate this to our team" message.
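
The ordering implied by this section - crisis first, clinical second, administrative last - can be sketched as a simple router. This is an illustration under loud assumptions: the keyword tuples are hypothetical and far too crude for production (a real system would need a tested classifier and clinical review), and the wording of `CRISIS_MESSAGE` is a placeholder. `CLINICAL_MESSAGE` reuses the response quoted earlier in this section.

```python
CRISIS_MESSAGE = (
    "If you are thinking about harming yourself, please call or text 988 "
    "(Suicide and Crisis Lifeline) now, or call 911 if you are in immediate danger."
)
CLINICAL_MESSAGE = (
    "That's a question for your care team. I can help you schedule an appointment, "
    "or if you believe this is urgent, I can help you understand your options for "
    "urgent or emergency care."
)

# Hypothetical keyword lists, for illustration only.
CRISIS_TERMS = ("suicide", "kill myself", "end my life", "hurt myself", "self-harm")
CLINICAL_TERMS = (
    "rash", "symptom", "blood sugar", "x-ray", "dosage",
    "test result", "lab result", "is it safe",
)


def route(message: str) -> tuple[str, str]:
    """Return (category, fixed_reply). An empty reply means normal FAQ handling."""
    text = message.lower()
    # The crisis check runs first so no other rule can shadow it.
    if any(term in text for term in CRISIS_TERMS):
        return "crisis", CRISIS_MESSAGE
    if any(term in text for term in CLINICAL_TERMS):
        return "clinical", CLINICAL_MESSAGE
    return "administrative", ""
```

The design point is the order of the checks and the use of fixed, pre-approved replies: the crisis and clinical responses are constants reviewed in advance, not text generated at runtime.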

HIPAA Compliance Requirements for Healthcare AI Chatbots

The Health Insurance Portability and Accountability Act establishes specific requirements for how protected health information (PHI) - any information that can identify a patient in connection with their health - is handled, stored, and transmitted. These requirements apply to AI chatbot systems that interact with patients or handle information that could constitute PHI.

| Requirement | What It Means for Chatbot Deployment |
|---|---|
| Data encryption in transit | All chatbot communications must use TLS 1.2 or higher |
| PHI storage controls | Conversation logs containing PHI require the same protections as other PHI |
| Business Associate Agreement (BAA) | The chatbot platform is a Business Associate; a BAA is legally required before deployment |
| Access controls | PHI accessible through chatbot integrations requires role-based access controls and audit logging |
| Audit trails | Logs of who accessed what PHI and when are required |
| Minimum necessary standard | Chatbot should collect and transmit only the minimum information needed for the function |
| Breach notification protocols | The platform must have documented breach detection and notification procedures |

The BAA requirement is the most commonly overlooked. Every platform that creates, receives, maintains, or transmits PHI on behalf of a covered entity must execute a Business Associate Agreement. Deploying a chatbot on a platform that does not offer a BAA, or without executing one, creates a HIPAA violation as soon as any PHI reaches the system - and in a patient-facing chatbot, where patients type freely, that is very difficult to rule out.
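
For the encryption-in-transit row of the table above, the following sketch shows one way a client connecting to a chatbot backend could refuse anything older than TLS 1.2, using Python's standard `ssl` module. The helper name `make_client_context` is an assumption for illustration; the platform itself would also need to enforce the same floor on the server side.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    """Build a client-side TLS context that rejects protocol versions below TLS 1.2."""
    # create_default_context() enables certificate and hostname verification.
    context = ssl.create_default_context()
    # Raise the floor explicitly rather than relying on library defaults.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context
```

A context built this way can be passed to standard-library clients, for example as the `context` argument of `urllib.request.urlopen`.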

Practices should evaluate any prospective chatbot platform against these requirements before deployment - not after.
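
When evaluating a platform against the audit-trail row, it helps to know what a minimal audit entry looks like. The sketch below is an assumption about shape, not any vendor's format: `audit_record` and its field names are hypothetical, and the `resource` value is meant to be a record identifier, never the PHI itself.

```python
import json
from datetime import datetime, timezone


def audit_record(actor: str, action: str, resource: str) -> str:
    """Return one JSON line recording who did what to which record, and when (UTC)."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,        # staff user ID or integration name
        "action": action,      # e.g. "read" or "export"
        "resource": resource,  # an identifier for the record, not its contents
    }
    return json.dumps(entry, sort_keys=True)
```

Writing entries as one JSON object per line keeps the log append-only and easy to search during a compliance review.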

Benchmark Data: What Healthcare Practices Can Expect

The performance data from healthcare AI chatbot deployments across primary care, specialty practices, and health systems is consistent:

- AI chatbots reduce healthcare appointment no-show rates by 26-28% through automated appointment reminders and pre-appointment preparation delivery (MGMA, 2025)

- Healthcare practices using AI chatbots see a 60-70% reduction in administrative call volume for FAQ-type inquiries

- Patient satisfaction scores improve by 20-30% when administrative questions receive instant responses rather than entering a phone queue

- 64% of patients report comfort using AI chatbots for administrative healthcare interactions (MGMA, 2025)

- Projected healthcare cost savings from chatbot automation: $3.6 billion by 2026 (Juniper Research)

- Practices deploying AI chatbots for appointment reminders see no-show rates drop from 15-20% to 8-12% on average

The no-show data is particularly significant for practices operating on tight appointment utilization. A practice with a 15% no-show rate and 100 appointments per week is losing 15 appointment slots per week. Reducing that to 10% recovers 5 slots - a meaningful revenue and capacity impact on an annualized basis.
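
The arithmetic above is easy to rerun with a practice's own numbers. A minimal sketch follows; `recovered_slots` is a hypothetical helper, and the 52-week annualization assumes the practice schedules appointments every week of the year.

```python
def recovered_slots(
    appointments_per_week: int,
    before_pct: int,
    after_pct: int,
    weeks: int = 52,  # assumption: no closed weeks
) -> tuple[float, float]:
    """Return (slots recovered per week, slots recovered over `weeks` weeks)."""
    weekly = appointments_per_week * (before_pct - after_pct) / 100
    return weekly, weekly * weeks


weekly, yearly = recovered_slots(100, 15, 10)
print(weekly, yearly)  # 5.0 260.0
```

Multiplying the annual figure by the practice's own average revenue per visit gives the revenue side of the estimate; no revenue figure is assumed here.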

What to Look for in a Healthcare AI Chatbot Platform

Given the compliance requirements specific to healthcare, the platform evaluation criteria for this sector extend beyond the standard chatbot feature set:

HIPAA-compliant infrastructure: Data centers with appropriate physical security, encryption at rest and in transit, and documented compliance certifications (SOC 2, HITRUST where applicable).

BAA availability: The platform must be willing and able to execute a Business Associate Agreement. If a vendor declines or does not offer a BAA, they are not a viable option for healthcare deployment.

Scope configuration: The platform must allow the practice to define and enforce the boundaries of what the chatbot can address. A chatbot platform that cannot be configured to decline clinical questions - and route them appropriately - is not suitable for healthcare.

Escalation controls: Configurable escalation paths for sensitive queries, including crisis response, that route to the appropriate human resource rather than a generic support queue.

Conversation log controls: Ability to configure retention periods, access controls, and anonymization for conversation logs that may contain PHI.

Integration with practice management systems: Connection to appointment scheduling systems, patient portals, and EHR platforms is what enables the highest-value use cases - appointment booking, intake collection, prescription refill routing.
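
To make the conversation log controls criterion concrete, here is a rough sketch of two checks a practice might expect a platform to support: masking obvious identifiers before logs are reviewed, and flagging entries past a configured retention period. Everything here is an assumption for illustration, including the two regular expressions and the helper names. Pattern-based masking of this kind is not a substitute for formal de-identification, and the retention period is a policy decision for the practice.

```python
import re
from datetime import datetime, timedelta

# Hypothetical patterns: US-style phone numbers and slash-separated dates only.
PHONE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")
DATE = re.compile(r"\b\d{1,2}/\d{1,2}/\d{2,4}\b")


def redact(text: str) -> str:
    """Mask phone numbers and dates; names and free-text details are NOT caught."""
    text = PHONE.sub("[PHONE]", text)
    return DATE.sub("[DATE]", text)


def is_expired(logged_at: datetime, retention_days: int, now: datetime) -> bool:
    """True when a log entry is older than the configured retention period."""
    return now - logged_at > timedelta(days=retention_days)
```

For example, `redact("DOB 04/12/1980, call 555-123-4567")` returns `"DOB [DATE], call [PHONE]"`.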

Implementation Guide: Deploying an AI Chatbot in a Medical Practice

A healthcare chatbot deployment differs from a standard business deployment in its preparation requirements more than its technical complexity. The additional steps are about scoping, compliance verification, and staff training.

Phase 1: Compliance and Scope Definition (Week 1)

- Confirm the platform's HIPAA compliance posture and execute a Business Associate Agreement

- Define the chatbot's approved scope: the specific question categories it will handle, and the specific escalation responses for out-of-scope queries

- Document the escalation paths for clinical questions, billing disputes requiring PHI review, and crisis scenarios

- Identify the practice management system integrations required
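
The scope definition in Phase 1 can be captured as data and checked before launch. The sketch below is one possible shape, with hypothetical names throughout (`ScopeRule`, the category labels, and the escalation path labels are invented for illustration). The check it performs is the one the list above implies: every category the chatbot declines must have a documented escalation path.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScopeRule:
    category: str
    handled_by_bot: bool
    escalation_path: str = ""  # required whenever the bot does not handle the category


# Hypothetical scope for a small practice.
SCOPE = [
    ScopeRule("appointment_scheduling", True),
    ScopeRule("insurance_and_billing_general", True),
    ScopeRule("clinical_question", False, "offer_appointment_or_urgent_care_info"),
    ScopeRule("billing_dispute_with_phi", False, "billing_staff_queue"),
    ScopeRule("crisis", False, "988_and_live_clinical_staff"),
]


def missing_escalations(rules: list[ScopeRule]) -> list[str]:
    """List out-of-scope categories that have no escalation path defined."""
    return [r.category for r in rules if not r.handled_by_bot and not r.escalation_path]
```

An empty result from `missing_escalations(SCOPE)` means every declined category has somewhere to go; a non-empty result is a gap to close before Phase 2 begins.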

Phase 2: Content Development (Week 1-2)

- Compile existing FAQs, preparation instructions, service descriptions, and policy documents

- Develop scope-appropriate responses to the 20-30 most frequent inquiry types

- Create clear, tested escalation messages for clinical questions and crisis scenarios

- Review all content with a licensed clinical staff member to confirm accuracy and appropriate scope

Phase 3: Technical Deployment (Week 2-3)

- Configure the knowledge base with approved content

- Set up integrations with appointment scheduling systems

- Configure patient contact capture and escalation routing

- Deploy on the website with appropriate patient-facing disclosure language (typically: "This chatbot handles administrative questions only and is not a clinical service")

Phase 4: Staff Training and Testing (Week 3)

- Train front desk and clinical staff on what the chatbot handles, what it escalates, and how to respond to escalated conversations

- Conduct live testing of crisis scenario escalation paths

- Review chatbot responses to clinical questions to confirm appropriate scope handling

Phase 5: Launch and Monitoring (Week 4+)

- Soft launch with monitoring

- Daily review of conversation logs in the first two weeks to identify any scope boundary issues

- Monthly optimization based on unanswered question patterns
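
The monthly optimization step amounts to counting which topics the chatbot failed to answer. A minimal sketch, assuming each log entry has already been labeled with a `topic` and an `answered` flag (both hypothetical field names; how a given platform exposes this data will vary):

```python
from collections import Counter


def top_unanswered(log: list[dict], n: int = 3) -> list[tuple[str, int]]:
    """Return the n most frequent topics among conversations the bot could not answer."""
    counts = Counter(entry["topic"] for entry in log if not entry["answered"])
    return counts.most_common(n)
```

The topics at the top of this list are the first candidates for new knowledge base entries, subject to the same clinical review as the original content.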

The Compliance-Capability Balance

The compliance requirements for healthcare AI deployment are real and specific. But they do not preclude meaningful AI chatbot use - they scope it. A healthcare AI chatbot that operates within its appropriate administrative and informational boundaries is not a compromised version of the technology. It is the technology applied to the problems it is genuinely equipped to solve.

The practices seeing the highest returns from AI chatbot deployment are the ones that defined their scope precisely, built their knowledge base with clinical review, and treated compliance as a design input rather than a constraint imposed after the fact. The result is a system that handles the administrative inquiry volume that was consuming their staff, without creating clinical or regulatory risk.

For practices that spend 40-60% of front desk time on repetitive administrative calls, that is a significant operational return - and one that improves with every month of conversation data that informs knowledge base refinement.
