When a business deploys an AI chatbot, there is a reasonable expectation that the bot will know about the business. It should know the pricing, the return policy, how long shipping takes, what the product actually does. But out of the box, a general-purpose AI model knows none of that. It knows the internet. It knows language. What it does not know is anything specific to your company, your products, or your customers.

This is the gap that training closes. And understanding how that training actually works, at a conceptual level, is the most valuable thing a business owner can know before deploying a chatbot. Not because you need to implement it yourself, but because it tells you exactly what to provide, what to expect, and how to keep performance high over time.

Why Generic AI Is Not Enough for Business Use

A large language model trained on publicly available data is extraordinarily capable at general reasoning, language comprehension, and synthesizing broad knowledge. It can discuss philosophy, explain tax law, write code, and summarize medical research. What it cannot do is tell your customer whether their order from last Tuesday has shipped, or explain the specific terms of your enterprise license agreement.

More problematically: it will try. A general AI, when asked a business-specific question it has no accurate knowledge of, does one of two things. It says it does not know, which is unhelpful. Or it generates a plausible-sounding answer based on patterns from similar businesses it encountered during training. This second behavior is called hallucination, and it is the central failure mode for ungrounded AI chatbots.

Consider the practical consequences. A customer asks your chatbot about your return window. The AI, having no access to your actual policy, generates a response based on common industry norms. It says 30 days. Your policy is 14 days. The customer initiates a return on day 22, your team has to explain the discrepancy, and the customer is not satisfied regardless of how politely the situation is handled. Trust is not lost in dramatic failures. It erodes in small, confident inaccuracies.

Training your chatbot on your own content is the mechanism that transforms a general language model into a knowledgeable representative of your specific business. Every accurate answer it gives traces back to content you provided.

The Learning Process in Plain Language

The process by which an AI chatbot learns from your business content follows a consistent pipeline. Each step serves a specific purpose, and understanding what happens at each stage clarifies why content quality matters so much.

Step 1: Content Ingestion

The process begins when you provide your content to the system. That content can take many forms: URLs to your website pages, uploaded documents (PDFs, Word files, text files), or manually entered text. The system retrieves and extracts the raw text from these sources, stripping formatting and leaving the substantive content.

The quality at this stage matters. A well-structured FAQ page yields clean, specific, easily searchable content. A poorly formatted PDF with embedded tables and images may yield garbled or incomplete text. The better the source material, the better the downstream results.
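
To make the extraction step concrete, here is a minimal sketch of pulling readable text out of a web page using only Python's standard library. It is an illustration, not how any particular platform does it: the names `TextExtractor` and `extract_text` and the choice of which tags count as blocks are assumptions made for this example.

```python
from html.parser import HTMLParser

# Closing one of these tags ends a visual block, so we emit a line break.
BLOCK_TAGS = {"p", "div", "li", "tr", "h1", "h2", "h3", "h4", "h5", "h6"}
# The contents of these tags are never shown to a reader.
HIDDEN_TAGS = {"script", "style"}


class TextExtractor(HTMLParser):
    """Collects visible text and drops markup, scripts, and styles."""

    def __init__(self):
        super().__init__()
        self.parts = []
        self.hidden_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in HIDDEN_TAGS:
            self.hidden_depth += 1
        elif tag == "br":
            self.parts.append("\n")

    def handle_endtag(self, tag):
        if tag in HIDDEN_TAGS:
            self.hidden_depth = max(0, self.hidden_depth - 1)
        elif tag in BLOCK_TAGS:
            self.parts.append("\n")

    def handle_data(self, data):
        if self.hidden_depth == 0:
            self.parts.append(data)


def extract_text(html):
    """Return the visible text of an HTML string, one block per line."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    raw_lines = "".join(parser.parts).split("\n")
    # Collapse runs of whitespace and drop empty lines.
    lines = (" ".join(line.split()) for line in raw_lines)
    return "\n".join(line for line in lines if line)
```

A clean page with headings and short paragraphs comes out of this kind of extraction as tidy, one-fact-per-line text, which is exactly why well-structured source pages pay off later in the pipeline.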

Step 2: Chunking

Raw documents are rarely processed as a whole. A 50-page product manual contains hundreds of distinct facts, policies, and instructions. Storing it as one unit would make it impossible to retrieve precisely. Instead, the system breaks the content into smaller segments, typically called chunks.

Chunk size is a technical parameter that involves trade-offs. Smaller chunks are more precise for retrieval but may lose surrounding context. Larger chunks preserve context but dilute the signal when searching. Well-designed systems use overlapping chunks, meaning adjacent chunks share some content, to avoid severing context at the wrong point.

This is why highly structured content, with clear headings, short paragraphs, and specific answers per section, tends to perform better than dense, unbroken prose. The structure guides the chunking.
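
The overlap idea is easier to see in code. The sketch below splits text into word-based chunks where each chunk repeats the last few words of the previous one. The function name `chunk_words` and the default sizes are assumptions for illustration; production systems often split on tokens, sentences, or headings instead.

```python
def chunk_words(text, chunk_size=200, overlap=40):
    """Split text into chunks of up to chunk_size words.

    Adjacent chunks share `overlap` words so that a sentence cut at a
    chunk boundary still appears whole in at least one chunk.
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")

    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this chunk already reached the end of the text
    return chunks


# Ten words, chunks of four, one word shared between neighbours.
sample = "w0 w1 w2 w3 w4 w5 w6 w7 w8 w9"
print(chunk_words(sample, chunk_size=4, overlap=1))
```

Raising `overlap` protects more context at the cost of storing more duplicate text, which is the trade-off described above in miniature.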

Step 3: Embedding

This is the step most unfamiliar to non-technical audiences, and arguably the most important. Each chunk of text is passed through an embedding model, which converts it into a mathematical vector: a list of hundreds or thousands of numbers that encode the meaning of that text.

The critical property of these vectors is that semantically similar texts produce mathematically similar vectors. "How do I cancel my subscription?" and "What is the process for ending my plan?" are different word sequences, but they mean nearly the same thing. Their vectors will be close together in the high-dimensional space the embedding creates.

This is what enables a chatbot to find relevant content even when the customer does not use the exact words that appear in the documentation. The meaning is encoded numerically, and similarity is measured mathematically.
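
"Measured mathematically" usually means cosine similarity, which compares the direction of two vectors. The sketch below uses hand-made three-number vectors purely as stand-ins; real embeddings come from a model and have hundreds or thousands of dimensions, and the values here are invented for illustration.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector has no direction to compare
    return dot / (norm_a * norm_b)


# Invented stand-ins for what an embedding model might produce.
cancel_subscription = [0.9, 0.1, 0.2]  # "How do I cancel my subscription?"
end_plan = [0.8, 0.2, 0.3]             # "What is the process for ending my plan?"
shipping_time = [0.1, 0.9, 0.3]        # "How long does shipping take?"

similar = cosine_similarity(cancel_subscription, end_plan)
different = cosine_similarity(cancel_subscription, shipping_time)
print(similar > different)
```

A score near 1 means the two texts point in nearly the same direction in the embedding space; a score near 0 means they are unrelated.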

Step 4: Storage in a Vector Database

The vectors, paired with the original text chunks they represent, are stored in a vector database. This is a specialized database optimized for a single operation: finding the vectors most similar to a given input vector, fast.

Unlike a traditional keyword database that searches for string matches, a vector database searches for meaning matches. It can search millions of stored vectors, usually through an index rather than a full scan, and return the most semantically relevant chunks in milliseconds.

Paperchat uses pgvector, a PostgreSQL extension that adds vector storage and similarity search to a standard relational database. The business content, its embeddings, and all related metadata therefore live in one system, which simplifies management and retrieval.
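
As a rough mental model of what gets stored, the sketch below keeps each chunk's text, its vector, and its source together in one record. It is an in-memory stand-in written for this article, not the schema of any real database; `ChunkRecord`, `InMemoryVectorStore`, and the source labels are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class ChunkRecord:
    text: str        # the original chunk, returned to the language model later
    embedding: list  # the vector produced by the embedding model
    source: str      # metadata: where the chunk came from


class InMemoryVectorStore:
    """Toy stand-in for a vector database table."""

    def __init__(self, dimensions):
        self.dimensions = dimensions
        self.records = []

    def add(self, text, embedding, source):
        # All vectors must come from the same model, so lengths must match.
        if len(embedding) != self.dimensions:
            raise ValueError(
                f"expected {self.dimensions} dimensions, got {len(embedding)}"
            )
        self.records.append(ChunkRecord(text, list(embedding), source))

    def remove_source(self, source):
        """Delete every chunk from one source; return how many were removed."""
        before = len(self.records)
        self.records = [r for r in self.records if r.source != source]
        return before - len(self.records)

    def __len__(self):
        return len(self.records)
```

Keeping the source alongside each vector is what makes targeted updates possible: when one page changes, only the chunks from that page need to be removed and re-added.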

Step 5: Retrieval

When a user sends a message to the chatbot, the system converts that message into a vector using the same embedding model. It then searches the vector database for the stored chunks with the highest semantic similarity to the question.

The number of chunks retrieved is typically a tunable parameter, often referred to as "top-K." If K is 5, the system returns the 5 most relevant chunks from the entire knowledge base. These become the context that is passed to the language model.

This retrieval step is what "grounds" the response. Instead of asking the language model to answer from general knowledge, the system provides it with the specific, relevant, current content from your knowledge base. The model's job shifts from knowledge recall to synthesis and explanation.
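
The retrieval flow can be sketched in a few lines. Note the loud assumption: `toy_embed` below is a word-count stand-in, so it only captures word overlap, not meaning the way a real embedding model does. It exists only so the example runs without external services. The point is the shape of the flow: embed the question with the same function used for the chunks, score every chunk, keep the top K.

```python
import heapq
import math
import re
from collections import Counter


def toy_embed(text):
    """Stand-in for an embedding model: a sparse vector of word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(count * b[word] for word, count in a.items() if word in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question, chunks, k=5):
    """Return the k highest-scoring (score, chunk) pairs, best first."""
    query_vector = toy_embed(question)  # same embedder as the chunks
    scored = [(cosine(query_vector, toy_embed(chunk)), chunk) for chunk in chunks]
    return heapq.nlargest(k, scored, key=lambda pair: pair[0])


knowledge_base = [
    "Returns are accepted within 14 days of delivery.",
    "Standard shipping takes 3 to 5 business days.",
    "The Pro plan costs 49 dollars per month.",
]
for score, chunk in retrieve("How many days does shipping take?", knowledge_base, k=2):
    print(round(score, 3), chunk)
```

In a real system the chunk vectors are computed once at ingestion time and stored, rather than recomputed per question as this toy does.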

Step 6: Grounded Generation

The language model receives two inputs: the user's question and the retrieved chunks. It then generates a response that draws primarily from those chunks rather than from general training.

The instruction to the model, embedded in its prompt configuration, typically specifies something like: "Answer using only the provided context. If the context does not contain the answer, say so." This constraint is the main safeguard against hallucination on business-specific questions. Because the model has been instructed to restrict itself to the retrieved chunks, it is far less likely to invent a policy that does not appear in them. No instruction makes invention impossible, however, which is why ongoing accuracy checks still matter.

The output is a coherent, natural-language response that synthesizes the relevant content and delivers it in a readable, appropriately toned format.
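
The two inputs are typically combined into a single prompt. Here is a minimal sketch of that assembly step; the function name `build_grounded_prompt` and the exact layout are assumptions for illustration, and the instruction text is the example wording quoted above. Sending the prompt to a model is deliberately left out.

```python
def build_grounded_prompt(question, chunks):
    """Combine the instruction, retrieved chunks, and question into one prompt."""
    # Number the chunks so a response can be traced back to its sources.
    context = "\n\n".join(
        f"[{number}] {chunk}" for number, chunk in enumerate(chunks, start=1)
    )
    return (
        "Answer using only the provided context. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer:"
    )


prompt = build_grounded_prompt(
    "What is your return window?",
    ["Returns are accepted within 14 days of delivery."],
)
print(prompt)
```

Numbering the chunks is a small design choice that makes it easier to see which piece of content informed a given answer.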

RAG vs. Fine-Tuning: What Business Owners Need to Know

There are two main approaches to making an AI model knowledgeable about a specific domain. Understanding the difference is important for evaluating any AI chatbot platform.

Fine-tuning involves modifying the model itself. You take a pre-trained language model and continue training it on your specific content. The model's internal parameters are adjusted to encode your business knowledge directly. Think of it as re-educating the model, teaching it new facts at a fundamental level.

Retrieval-Augmented Generation (RAG) keeps the base model unchanged. Instead of teaching the model new facts, it gives the model access to a searchable library at response time. The model consults the library before answering, then generates a response grounded in what it found.

For business applications, RAG has become the dominant approach, and for substantive reasons:

Fine-tuning typically requires a substantial, carefully prepared training dataset, significant compute cost, and specialized machine learning expertise. Each iteration can take days to weeks. When your content changes, which happens constantly in a real business, the model must be retrained, either from scratch or incrementally. Knowledge in a fine-tuned model does not simply "update"; it has to be trained in again.

RAG requires no model training at all. Updating the knowledge base is as simple as adding new documents or re-crawling updated URLs. Changes take effect as soon as the new content is indexed. Cost is typically far lower. And because the source content is explicitly retrieved and provided to the model, the reasoning is more auditable: you can trace exactly which chunks informed a given response.

There is also a category called keyword matching, which predates both approaches. It searches for literal string matches in a database and returns pre-written responses. It is fast, predictable, and completely unable to handle natural language variation. A question about "sending something back" will not match a keyword database indexed on "return policy."
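
The limitation is easy to reproduce. The sketch below is a deliberately simple keyword bot; the `RESPONSES` table and its wording are invented for this example.

```python
# Pre-written responses indexed by a literal keyword (hypothetical content).
RESPONSES = {
    "return policy": "Items can be returned within 14 days.",
}


def keyword_bot(message):
    """Return a canned response if a keyword appears verbatim, else None."""
    text = message.lower()
    for keyword, response in RESPONSES.items():
        if keyword in text:
            return response
    return None


print(keyword_bot("What is your return policy?"))
print(keyword_bot("Can I send something back?"))
```

The first question contains the indexed phrase and gets an answer. The second asks the same thing in different words and gets nothing, which is the gap that embedding-based retrieval closes.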

Chatbot Training Approaches Compared

[Chart: each approach scored out of 10 across five key dimensions; higher is better for each dimension.]

Source: Industry analysis synthesized from Anthropic, LangChain, and Gartner research, 2025. "Low Hallucination" and "Low Maintenance" scores are inverted from raw risk ratings so that higher always means better.

| Approach | Accuracy | Update Ease | Hallucination Risk | Cost | Setup Complexity |
|---|---|---|---|---|---|
| Keyword Matching | Low | Moderate | None (returns fixed text or nothing) | Very low | Low |
| Fine-Tuning | High (for trained topics) | Very difficult | Moderate on untrained topics | Very high | Very high |
| RAG | High | Very easy | Low (grounded in retrieved content) | Low to moderate | Moderate |

The conclusion for most business deployments is clear. RAG is faster to deploy, cheaper to maintain, easier to update, and produces more trustworthy responses on the specific domain questions that matter most to your customers. Fine-tuning makes sense for highly specialized models with massive proprietary datasets. For a business chatbot trained on product documentation and support content, RAG is the correct architecture.

What Content to Train On

A well-configured knowledge base is comprehensive about your business's core operations and deliberately excludes content that dilutes relevance. The following categories consistently produce the highest-value training material:

Product and service documentation. Feature descriptions, how things work, what is included, technical specifications. This is where most customer questions originate, and it should be the most thoroughly covered section of the knowledge base.

FAQ pages. If your team has already distilled the most common questions into a structured FAQ, this is some of the highest-quality training material available. The questions map directly to customer intent, and the answers are already written to be understandable.

Policy documents. Return policies, shipping timelines, cancellation terms, privacy policy summaries, refund procedures. Customers ask about policies frequently, especially in post-purchase support. Wrong answers here cause immediate trust damage.

Pricing and plan information. What each tier includes, what is excluded, how billing works, whether there is a free trial. Pricing questions are among the most common pre-purchase inquiries. Accuracy here directly affects conversion.

Support and troubleshooting guides. Step-by-step instructions for common issues. How to reset a password, how to connect an integration, what to do if something does not work as expected.

Case studies and testimonials. These are valuable for pre-purchase conversations. Customers evaluating your product benefit from seeing concrete examples of results achieved by similar businesses.

Company and contact information. Business hours, how to reach a human, physical locations if applicable, response time expectations.

What to exclude is equally important. Historical blog content from several years ago may reference deprecated features or old pricing. Internal documentation not relevant to customer questions adds noise. Marketing copy that describes what the product "can" do in aspirational terms, without concrete specifics, tends to produce vague answers.

What Happens When Content Is Missing or Outdated

The two most dangerous states for a business chatbot are operating with outdated content and operating with gaps in coverage. Both lead to the same outcome: the chatbot generates responses that are either wrong or insufficiently grounded.

Outdated content produces confident wrong answers. This is the worst failure mode because the chatbot's tone does not signal uncertainty. It answers with the same certainty whether it is drawing from accurate current content or stale content from a previous pricing structure. The customer has no reason to doubt the answer. The error compounds when the customer makes decisions based on that answer.

Content gaps produce either unhelpful deflections ("I don't have information about that") or hallucinated responses where the model fills the gap with plausible inference. The latter is more dangerous and less visible.

How to detect problems early. The most reliable signal is the escalation and low-confidence log. Questions that routinely escalate to human agents despite being within the chatbot's intended scope indicate either missing content or poorly phrased source material. A weekly review of these logs in the first 90 days of operation will surface the majority of gaps.

A secondary signal is explicit customer feedback. If your chat widget has a simple thumbs-down mechanism, negative ratings clustered around specific topics indicate training problems.
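
A weekly log review can be partly automated. The sketch below counts escalations and thumbs-down ratings per topic and flags topics that cross a threshold. The log format (dictionaries with `topic`, `escalated`, and `thumbs_down` keys) and the threshold of three are assumptions for illustration; a real platform's export will look different.

```python
from collections import Counter


def topics_needing_attention(log, min_count=3):
    """Return (topic, count) pairs with at least min_count problem signals."""
    counts = Counter(
        entry["topic"]
        for entry in log
        if entry.get("escalated") or entry.get("thumbs_down")
    )
    # most_common() sorts from the most to the least frequent topic.
    return [(topic, n) for topic, n in counts.most_common() if n >= min_count]


weekly_log = [
    {"topic": "returns", "escalated": True},
    {"topic": "returns", "escalated": True},
    {"topic": "returns", "thumbs_down": True},
    {"topic": "pricing", "thumbs_down": True},
    {"topic": "shipping"},
    {"topic": "shipping"},
]
print(topics_needing_attention(weekly_log))
```

A topic that shows up in this list week after week is a strong hint that the source content for it is missing, outdated, or poorly phrased.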

Prevention is simpler than correction. The operational discipline is linking content updates to the chatbot update workflow. When pricing changes, the knowledge base updates. When a policy is revised, the old version is replaced. When a new product launches, its documentation is added before the chatbot goes live on product pages.

Platforms like Paperchat support URL-based training, which means a scheduled re-crawl of your website content can keep the knowledge base aligned with what is currently published without requiring manual re-uploads every time something changes.
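
One common way a scheduled re-crawl avoids unnecessary work is to compare a fingerprint of each page against the one saved from the previous crawl. The sketch below illustrates that general idea only; it is not a description of how Paperchat implements re-crawling, and the function names and example URLs are hypothetical.

```python
import hashlib


def content_hash(text):
    """Return a stable fingerprint of a page's extracted text."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def pages_to_reindex(previous_hashes, current_pages):
    """Compare a fresh crawl against stored hashes.

    previous_hashes: {url: hash} saved after the last crawl
    current_pages:   {url: extracted text} from the crawl just completed
    Returns (changed_or_new_urls, removed_urls), each sorted.
    """
    changed = [
        url
        for url, text in current_pages.items()
        if previous_hashes.get(url) != content_hash(text)
    ]
    removed = [url for url in previous_hashes if url not in current_pages]
    return sorted(changed), sorted(removed)


last_crawl = {
    "https://example.com/pricing": content_hash("Pro plan: 49 dollars per month."),
    "https://example.com/returns": content_hash("Returns within 14 days."),
    "https://example.com/old-promo": content_hash("Spring sale."),
}
this_crawl = {
    "https://example.com/pricing": "Pro plan: 59 dollars per month.",
    "https://example.com/returns": "Returns within 14 days.",
}
print(pages_to_reindex(last_crawl, this_crawl))
```

Pages in the first list need to be re-chunked and re-embedded; pages in the second list should have their chunks deleted so the chatbot stops quoting content that is no longer published.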

The Update Cycle: How Often to Retrain

A chatbot's knowledge base is not a static artifact. It is a living operational asset that requires the same discipline applied to any product documentation.

Event-based updates should be immediate and non-negotiable. Any change to pricing, policies, core product features, or service availability should trigger an update to the knowledge base on the same day. Allowing even a two-week lag between a business change and a knowledge base update creates a window during which the chatbot is actively providing wrong information.

Scheduled reviews should happen monthly at minimum. This involves auditing the full knowledge base against current published documentation, removing content that is no longer accurate, and adding coverage for topics that have generated questions the chatbot could not handle well.

Launch-triggered additions occur when new products, features, or services go live. Before announcement and promotion, the relevant training content should already be in the knowledge base. New products generate new questions immediately; having the answers ready before the traffic arrives is straightforward to operationalize.

Annual audits are valuable for catching scope creep, whether the knowledge base has grown beyond what customers actually ask about or has fallen behind what the business now offers. Knowledge bases that have grown over time may also contain contradictory content from different periods. Annual audits clean up that technical debt and ensure the knowledge base reflects the current business rather than the cumulative history of it.

Quality Signals: Measuring Training Effectiveness

Training quality can be measured objectively. The most practical method for business operators is the structured accuracy test.

The 20-question accuracy test is the standard benchmark. Before a chatbot goes live (and at regular intervals after deployment), write 20 questions that represent the full scope of what the chatbot should be able to answer. Include questions about pricing, policies, product features, troubleshooting, and company information. For each question, evaluate whether the chatbot's response is accurate, complete, and appropriately toned.

The following accuracy bands are a practical rule of thumb for a chatbot answering from a domain-specific knowledge base:

- Below 70%: The knowledge base has serious gaps or outdated content. Not ready for production.

- 70-80%: Adequate for low-stakes informational use, but requires active monitoring and rapid iteration.

- 80-90%: Acceptable for production deployment with a clear escalation path for gaps.

- 90%+: Well-trained, suitable for high-volume deployment; the chatbot is genuinely reducing human support burden.

These thresholds correspond to real operational outcomes. A chatbot at 65% accuracy is actively damaging trust more often than it is building it. A chatbot at 90% accuracy is handling the vast majority of questions correctly and routing edge cases to humans appropriately.
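
Scoring the test is simple arithmetic, sketched below. The function names are made up for this example, and one detail is an assumption: a score that lands exactly on a boundary (70, 80, or 90) is placed in the higher band, since the bands above do not say which side a boundary belongs to.

```python
def accuracy_percent(results):
    """results: one boolean per test question, True if the answer was acceptable."""
    if not results:
        raise ValueError("at least one graded question is required")
    return 100 * sum(1 for passed in results if passed) / len(results)


def readiness_band(percent):
    """Map an accuracy percentage to the readiness bands described above."""
    if percent < 70:
        return "not ready for production"
    if percent < 80:
        return "low-stakes use with active monitoring"
    if percent < 90:
        return "production with a clear escalation path"
    return "high-volume deployment"


# 18 acceptable answers out of 20 questions.
graded = [True] * 18 + [False] * 2
score = accuracy_percent(graded)
print(score, readiness_band(score))
```

With only 20 questions, each answer is worth five percentage points, so a single regraded answer can move a chatbot across a band. Treat results near a boundary as a prompt to test more questions rather than as a verdict.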

Beyond the benchmark test, track these signals continuously in production:

- Deflection rate: The percentage of conversations resolved by the chatbot without human intervention. Well-trained bots typically achieve 60-85% deflection on topics they are trained on (Gartner, 2025).

- Escalation rate: The complement of deflection, that is, the percentage of conversations handed off to a human. Rising escalation rates signal either knowledge gaps or increasing question complexity.

- CSAT on bot-handled conversations: Post-chat satisfaction scores on conversations that were fully handled by the AI. This is a direct measure of response quality from the customer's perspective.

- Average conversation turns to resolution: More turns can indicate the chatbot is not answering questions clearly or completely the first time.
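
The four signals above can be computed from a plain export of conversation records. The sketch below assumes a hypothetical record format with `escalated`, `turns`, and an optional `csat` field on a 1 to 5 scale; real analytics exports will use different field names.

```python
def summarize(conversations):
    """Compute the four production signals from conversation records."""
    total = len(conversations)
    if total == 0:
        raise ValueError("no conversations to summarize")

    bot_handled = [c for c in conversations if not c["escalated"]]
    deflection = 100 * len(bot_handled) / total

    # CSAT and turns are measured only on conversations the bot resolved itself.
    ratings = [c["csat"] for c in bot_handled if c.get("csat") is not None]
    turns = [c["turns"] for c in bot_handled]

    return {
        "deflection_rate": deflection,
        "escalation_rate": 100 - deflection,
        "bot_csat": sum(ratings) / len(ratings) if ratings else None,
        "avg_turns_to_resolution": sum(turns) / len(turns) if turns else None,
    }


sample_week = [
    {"escalated": False, "turns": 2, "csat": 5},
    {"escalated": False, "turns": 4, "csat": 4},
    {"escalated": False, "turns": 6, "csat": None},  # customer left no rating
    {"escalated": True, "turns": 8, "csat": 2},
]
print(summarize(sample_week))
```

Tracking these numbers week over week matters more than any single reading: a slow rise in escalations or turns is often the first visible sign that the knowledge base has drifted out of date.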

How Paperchat Implements This

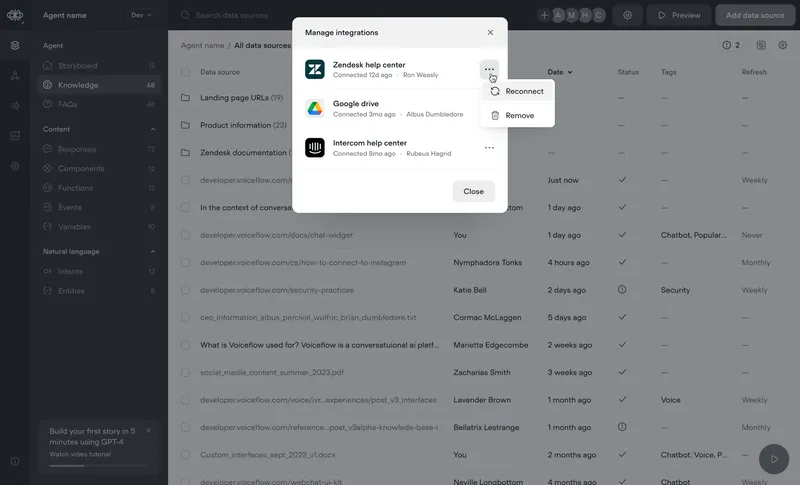

Paperchat is built on the RAG architecture described in this article. When you train a chatbot in Paperchat, you can provide content from three sources: website URLs (which Paperchat crawls and indexes), uploaded files (PDFs, Word documents, plain text), and directly entered text or Q&A pairs.

That content is chunked, embedded using OpenAI's embedding models, and stored in pgvector. When a user asks a question, the system runs a semantic similarity search, retrieves the most relevant chunks, and passes them to the language model as grounding context.

The knowledge base is fully updateable at any time from the dashboard. You can re-crawl URLs, replace documents, add new content, or remove outdated material. Changes are reflected immediately in chatbot responses. There is no retraining delay, no model fine-tuning required, and no engineering work involved.

This is the architecture that makes business chatbots practical: update your content the same way you update your website, and your chatbot stays current.

The Practical Takeaway

The question business owners most often ask about AI chatbots is: "How do I know it will give accurate answers?" The answer is almost entirely determined by training quality. Not the underlying model (most production platforms use capable, current models). Not the chat interface. Not even the configuration. The knowledge base is the variable that most directly drives response accuracy.

Understanding the pipeline — ingestion, chunking, embedding, storage, retrieval, grounded generation — is not technical curiosity. It is operational knowledge. It tells you what good content looks like, why updates matter, and how to measure whether the system is performing at the level your business needs.

A chatbot trained on current, specific, well-structured content will give answers your customers can rely on. That reliability is what turns a chat widget into a genuine business asset.
