How Real-Time Conversation Analytics Help You Improve Customer Experience

Conversation analytics transforms every chatbot interaction into structured insight - here is how businesses use that data to close knowledge gaps, reduce escalations, and continuously improve customer experience.

Every business with a customer-facing function has a version of the same problem: it knows that customers are frustrated, that certain processes are confusing, and that support volume is higher than it should be - but it does not know specifically why. Phone support calls are rarely analyzed at scale. Support email threads are tracked by volume but not by content. Live chat logs exist but are almost never systematically reviewed. The result is a persistent gap between the signals customers are generating and the intelligence the business actually uses to make decisions.

AI chatbots close that gap. Not primarily because they answer questions - though they do that well - but because every conversation is a structured data event. Every question asked, every response generated, every escalation triggered, every conversation abandoned at a particular point: all of it is logged, searchable, and analyzable at a scale that no other customer interaction channel provides. For businesses willing to look at that data, it is the most granular customer intelligence source available - and it is being produced continuously, at no additional cost, by the chat system already running on the website.

The Data Gap in Customer Intelligence

Consider the information asymmetry in a typical support environment. A business's phone support team handles 200 calls per day. Of those, roughly 12% are logged with structured notes - most are too brief or the agents too busy to capture anything beyond a resolution code. Email support generates more documentation but is categorized at a coarse level: "billing question," "technical issue," "general inquiry." The actual content of the question - the specific confusion, the exact wording, the policy that was unclear - is almost never preserved or analyzed.

This data problem is not new. Customer experience researchers have noted for years that organizations consistently underestimate the information available in customer interactions and consistently fail to extract it systematically (Forrester, 2023). The challenge has historically been one of volume and structure: human conversations are high-volume and unstructured, making systematic analysis expensive and inconsistent.

AI chatbot conversations are different. They are digital from the start. Every message is timestamped, categorized (by intent detection or manual tagging), and linked to a session that includes the visitor's entry point, the pages they viewed, and whether the conversation resulted in a resolution, an escalation, or an abandonment. The structural differences are significant:

| Channel | Data Available | Systematically Analyzed? | Scalable Analysis? |
|---|---|---|---|
| Phone support | Low (unless recorded + transcribed) | Rarely | Expensive |
| Support email | Medium (text body retained) | Sometimes | Partially |
| Traditional live chat | High (full transcripts) | Rarely | Possible but labor-intensive |
| AI chatbot | Very high (transcripts + intent + resolution + session data) | Can be automated | Yes |

The practical implication is that an AI chatbot deployment, properly configured and reviewed, can produce more actionable customer intelligence in a month than many businesses collect in a year from all other channels combined.

What Conversation Analytics Actually Reveals

The value of chatbot analytics is not in dashboards showing high-level volume metrics. It is in the specific, operational intelligence that conversation data surfaces when examined with the right questions.

Question Volume by Category

The most basic but consistently surprising finding from chatbot analytics is the distribution of question types. Most businesses have assumptions about what their customers most commonly ask - these assumptions are frequently wrong. A professional services firm that believes its primary support burden is scheduling and billing may discover, when looking at actual chatbot logs, that the highest-volume category is questions about a specific service feature that the website describes poorly. An e-commerce store assuming that shipping questions dominate may find that return policy questions significantly outpace them.

The gap between assumption and reality has direct operational consequences. If a business is training its support team and building its FAQ infrastructure based on assumed question distribution rather than actual data, it is investing in the wrong content and leaving actual customer confusion unaddressed.

Chatbot analytics makes the actual distribution visible. Category data from conversation logs - particularly for the top 10-15 question types by volume - is typically more accurate than customer surveys, support team intuition, or website analytics alone.
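As a rough illustration, the category distribution can be computed from an exported conversation log in a few lines. This is a minimal sketch: the record layout and the `category` field are assumptions made for the example, not the export format of any particular platform.

```python
from collections import Counter

# Hypothetical export: one record per conversation with an assigned category.
conversations = [
    {"id": 1, "category": "returns"},
    {"id": 2, "category": "shipping"},
    {"id": 3, "category": "returns"},
    {"id": 4, "category": "billing"},
    {"id": 5, "category": "returns"},
    {"id": 6, "category": "shipping"},
]

def category_distribution(records, top_n=15):
    """Return (category, count, share) tuples for the top_n categories by volume."""
    counts = Counter(r["category"] for r in records)
    total = sum(counts.values())
    return [(cat, n, n / total) for cat, n in counts.most_common(top_n)]

distribution = category_distribution(conversations)
for category, count, share in distribution:
    print(f"{category:<10} {count:>3} {share:.0%}")
```

Comparing this list against the team's assumed ranking is usually the quickest way to see where assumption and reality diverge.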

Unanswered and Low-Confidence Questions

One of the highest-value outputs of conversation analytics is the set of questions the chatbot could not answer confidently. These are knowledge base gaps - and they are an exact map of where customers need information that the business has not yet provided.

When a chatbot frequently responds with uncertainty or falls back to escalation on a particular question type, that is not a chatbot failure. It is a signal that the knowledge base requires a specific addition. The unanswered question log is, in effect, a prioritized content development roadmap built entirely from real customer data.

Businesses that act systematically on this data - adding content to address the top unanswered question categories monthly - see consistent improvement in resolution rates and reduction in escalation frequency. Research from Gartner (2024) found that organizations using structured conversation analytics to drive knowledge base updates achieve a 40-60% reduction in chatbot non-resolution responses within the first six months of implementation.
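One way to turn the unanswered question log into a ranked list is sketched below. It assumes each logged response carries a numeric `confidence` score and an `escalated` flag; both field names and the 0.5 cutoff are hypothetical and would need to match whatever your platform actually records.

```python
from collections import Counter

# Hypothetical log rows: the confidence score and "escalated" flag are assumed fields.
responses = [
    {"category": "warranty", "confidence": 0.35, "escalated": True},
    {"category": "warranty", "confidence": 0.42, "escalated": False},
    {"category": "shipping", "confidence": 0.91, "escalated": False},
    {"category": "invoices", "confidence": 0.48, "escalated": True},
    {"category": "warranty", "confidence": 0.88, "escalated": False},
    {"category": "shipping", "confidence": 0.95, "escalated": True},
]

CONFIDENCE_FLOOR = 0.5  # assumed cutoff; tune against your own data

def knowledge_gaps(rows, floor=CONFIDENCE_FLOOR, top_n=10):
    """Rank categories by how often the bot answered below the floor or escalated."""
    gaps = Counter(
        row["category"]
        for row in rows
        if row["confidence"] < floor or row["escalated"]
    )
    return gaps.most_common(top_n)

gaps = knowledge_gaps(responses)
print(gaps)
```

The categories at the top of this list are the candidates for the next round of knowledge base additions.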

Resolution Rate by Query Type

Not all question types resolve at equal rates. Some queries - transactional questions about order status, policy questions with clear answers, basic how-to questions - resolve at very high rates through AI responses. Others - complaints, complex technical issues, high-value purchase decisions - escalate to human agents more frequently.

Tracking resolution rate by query type reveals which categories the AI is handling well and which require either knowledge base improvement or routing adjustment. A question type with a 30% resolution rate that is generating 200 conversations per month represents 140 escalations that might be avoidable with better content - or an indication that the escalation is appropriate and the routing should be made more efficient.
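The calculation behind that example is simple enough to sketch. The snippet below assumes each conversation record has a `category` and an `outcome` field (hypothetical names), and reproduces the 200-conversation, 30% resolution case from the paragraph above.

```python
from collections import defaultdict

# Hypothetical records: "outcome" is one of "resolved", "escalated", "abandoned".
conversations = (
    [{"category": "order_status", "outcome": "resolved"}] * 90
    + [{"category": "order_status", "outcome": "escalated"}] * 10
    + [{"category": "returns", "outcome": "resolved"}] * 60
    + [{"category": "returns", "outcome": "escalated"}] * 140
)

def resolution_by_category(records):
    """Return {category: (total, resolved, resolution_rate)}."""
    totals = defaultdict(int)
    resolved = defaultdict(int)
    for r in records:
        totals[r["category"]] += 1
        if r["outcome"] == "resolved":
            resolved[r["category"]] += 1
    return {c: (totals[c], resolved[c], resolved[c] / totals[c]) for c in totals}

stats = resolution_by_category(conversations)
# Lowest resolution rate first: these are the categories to review.
for category, (total, ok, rate) in sorted(stats.items(), key=lambda kv: kv[1][2]):
    print(f"{category:<14} total={total:<4} unresolved={total - ok:<4} rate={rate:.0%}")
```

Sorting by rate rather than volume is a deliberate choice here; in practice you would likely weigh both, since a low rate on a low-volume category matters less.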

Peak Volume Patterns

When are your customers reaching out? The answer may be obvious during business hours, but the full picture usually is not. AI chatbot data shows demand curves with hourly granularity across every day of the week.

For most businesses, two findings emerge from this analysis: first, a significant proportion of customer interactions occur outside staffing hours (commonly 30-45% of total daily volume for businesses serving consumers); and second, within business hours, peak demand concentrates in narrow windows that may not be matched by current staffing patterns.

This data has immediate operational value for planning human agent coverage. Rather than staffing based on historical assumptions or general industry patterns, teams can align coverage directly with the periods when actual customer demand is highest and when AI escalations are most likely.
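A demand curve of this kind can be derived from conversation start times alone. The sketch below assumes timestamps are exported as ISO 8601 strings in local time and that staffing runs Monday to Friday, 09:00 to 17:00; both are assumptions for illustration.

```python
from collections import Counter
from datetime import datetime

# Hypothetical conversation start times (ISO 8601, local time).
started_at = [
    "2025-03-03T08:15:00", "2025-03-03T10:05:00", "2025-03-03T10:40:00",
    "2025-03-03T13:20:00", "2025-03-03T19:45:00", "2025-03-03T22:10:00",
    "2025-03-08T11:30:00", "2025-03-08T21:00:00",
]

STAFFED_HOURS = range(9, 17)     # assumed coverage: 09:00-16:59
STAFFED_WEEKDAYS = range(0, 5)   # assumed coverage: Monday-Friday

def volume_by_slot(timestamps):
    """Count conversations per (weekday, hour); weekday 0 is Monday."""
    parsed = [datetime.fromisoformat(ts) for ts in timestamps]
    return Counter((t.weekday(), t.hour) for t in parsed)

def off_hours_share(timestamps):
    """Fraction of conversations that start outside staffed hours."""
    slots = volume_by_slot(timestamps)
    total = sum(slots.values())
    staffed = sum(
        n for (day, hour), n in slots.items()
        if day in STAFFED_WEEKDAYS and hour in STAFFED_HOURS
    )
    return (total - staffed) / total

print(volume_by_slot(started_at).most_common(3))
print(f"off-hours share: {off_hours_share(started_at):.0%}")
```

Running the same counts over escalated conversations only would show when human agents, rather than the chatbot, are in demand.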

Sentiment Trends Over Time

Aggregate sentiment analysis across chatbot conversations shows whether the customer experience is improving or deteriorating over time. If sentiment scores on conversations about the checkout process begin declining over a three-week window, that is an early warning signal of a problem - before it shows up in churn data, review volume, or social media.

This early-warning function is particularly valuable because customer experience problems typically develop gradually. A deteriorating process creates increasing frustration, but that frustration only becomes visible to the business through lagging indicators - reduced repeat purchase rates, higher return volumes, negative reviews - weeks or months after the problem first manifested. Conversation sentiment analysis surfaces the same signal in near real-time.
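A simple version of this early warning is a check for several consecutive weeks of falling average sentiment. The sketch below assumes sentiment scores between -1 and 1 have already been assigned to each conversation by some upstream step; the data and the three-week rule are illustrative, not a recommended standard.

```python
from statistics import mean

# Hypothetical weekly sentiment scores (-1 to 1) for checkout conversations,
# oldest week first. How scores are produced is outside this sketch.
weekly_scores = {
    "2025-W10": [0.6, 0.5, 0.7],
    "2025-W11": [0.6, 0.6, 0.5],
    "2025-W12": [0.4, 0.5, 0.4],
    "2025-W13": [0.3, 0.2, 0.4],
    "2025-W14": [0.1, 0.2, 0.1],
}

def weekly_means(scores_by_week):
    """Average sentiment per week, preserving the input order."""
    return [(week, mean(scores)) for week, scores in scores_by_week.items()]

def sustained_decline(means, weeks=3):
    """True if the mean fell week over week for the last `weeks` transitions."""
    values = [m for _, m in means]
    if len(values) < weeks + 1:
        return False
    recent = values[-(weeks + 1):]
    return all(later < earlier for earlier, later in zip(recent, recent[1:]))

means = weekly_means(weekly_scores)
print(means)
print("declining:", sustained_decline(means))
```

With small weekly samples the averages will be noisy, so a real check would probably also require a minimum number of conversations per week.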

Drop-Off Points in Conversations

Where are customers abandoning conversations? Drop-off analysis identifies the moments in a chatbot flow where users disengage without resolution. Systematic drop-off at a specific question type, at a particular response length, or at certain times of day indicates a point of friction in the conversational experience.

High drop-off after a specific type of AI response often means the response is not addressing the question accurately enough to satisfy the customer. High drop-off after the third message exchange often indicates frustration - the conversation has not reached a resolution quickly enough.
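To see where abandonment concentrates, it can help to group abandoned sessions by the number of messages the user had sent when they left. The following is a minimal sketch with hypothetical field names (`user_messages`, `outcome`).

```python
from collections import Counter

# Hypothetical sessions: number of user messages sent and the final outcome.
sessions = [
    {"user_messages": 1, "outcome": "resolved"},
    {"user_messages": 3, "outcome": "abandoned"},
    {"user_messages": 3, "outcome": "abandoned"},
    {"user_messages": 2, "outcome": "resolved"},
    {"user_messages": 3, "outcome": "escalated"},
    {"user_messages": 5, "outcome": "abandoned"},
    {"user_messages": 2, "outcome": "abandoned"},
]

def drop_off_by_turn(records):
    """Return {turn: share of all abandonments that ended at that turn}."""
    abandoned = Counter(
        r["user_messages"] for r in records if r["outcome"] == "abandoned"
    )
    total = sum(abandoned.values())
    if total == 0:
        return {}
    return {turn: n / total for turn, n in sorted(abandoned.items())}

drop_off = drop_off_by_turn(sessions)
print(drop_off)
```

The same grouping can be repeated by category or by hour of day to test the other drop-off patterns described above.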

Real-Time vs. Batch Analytics: Why Both Matter

The distinction between real-time and batch analytics is not just technical - it determines which types of decisions each mode supports.

Real-time analytics provides visibility into what is happening right now: conversation volume in the current hour, escalation rate relative to baseline, average response time, and active conversations by category. This is the mode that enables immediate operational decisions. If escalation rate spikes during a campaign launch, real-time monitoring surfaces that within minutes rather than after the fact. If a new product launch generates an unexpected volume of questions about a specific feature, the team sees that before the end of the first day.

Batch analytics - reviewed weekly, monthly, or quarterly - is where the deeper intelligence lives. Monthly category volume trends show whether a support issue is a one-time event or a structural problem. Quarter-over-quarter resolution rate improvements show whether knowledge base investments are paying off. Cohort analysis of which question types are most common from new visitors versus returning customers reveals different information needs across the customer lifecycle.

The businesses that extract the most value from conversation analytics use both. Real-time monitoring enables in-session response to anomalies. Batch review provides the systematic intelligence that drives training investments, content development, and process improvements.
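On the real-time side, the core mechanism is a rolling window compared against a baseline taken from batch reports. The class below is a sketch under stated assumptions: conversations arrive in timestamp order, timestamps are plain seconds, and the class name, window length, and baseline value are all hypothetical.

```python
from collections import deque

class RollingEscalationRate:
    """Escalation rate over the most recent `window_seconds` of conversations."""

    def __init__(self, window_seconds=3600):
        self.window_seconds = window_seconds
        self.events = deque()  # (timestamp_seconds, escalated) pairs, oldest first

    def record(self, timestamp, escalated):
        """Add a finished conversation and discard events that left the window."""
        self.events.append((timestamp, escalated))
        cutoff = timestamp - self.window_seconds
        while self.events and self.events[0][0] <= cutoff:
            self.events.popleft()

    def rate(self):
        """Fraction of conversations in the window that escalated (0.0 if empty)."""
        if not self.events:
            return 0.0
        return sum(1 for _, esc in self.events if esc) / len(self.events)

monitor = RollingEscalationRate(window_seconds=3600)
for ts, escalated in [(0, False), (600, True), (1800, False), (4000, True), (4200, True)]:
    monitor.record(ts, escalated)

BASELINE = 0.30  # assumed rate taken from batch reports
print(f"current={monitor.rate():.0%} baseline={BASELINE:.0%}")
```

The batch reports supply the baseline; the rolling window supplies the current reading, which is why the two modes work best together.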

Five Specific Improvements Analytics Reveals

1. Knowledge Base Gaps

The most direct application of conversation analytics is filling the gaps in chatbot training content. Every unanswered question or low-confidence response is a mapped gap. The gap identification process requires only a periodic review of the low-resolution conversation log - typically 30-60 minutes per month - to identify the top 5-10 categories of questions the chatbot is not adequately addressing.

Adding content to address these categories is the single highest-return investment available in the ongoing management of a deployed chatbot. Analysis across chatbot deployments tracked by customer success teams at major platforms shows that structured monthly gap-filling produces an additional 15-25% improvement in deflection rate over set-and-forget deployments within the first 90 days (Intercom, 2024).

The content additions do not need to be elaborate. A concise, accurate answer to the specific question being asked is more valuable than a comprehensive document that addresses the question obliquely. Targeted knowledge base updates driven by actual unanswered question data outperform generic content additions.

2. Policy and Website Clarity Issues

When a specific policy or product attribute generates a consistently high volume of questions, the chatbot's analytics are communicating something important: customers cannot find or understand this information on the website. The chatbot is compensating for a website communication failure.

This is actionable in two directions. In the short term, ensuring the chatbot answers the question accurately provides immediate customer resolution. In the medium term, improving the clarity of the relevant website content - the returns policy page, the shipping FAQ, the product description, the pricing table - reduces the volume of questions that need to be answered at all.

Analytics data quantifies the impact of these website improvements. If a returns policy clarification on the website reduces chatbot questions about returns by 40% in the following month, the analytics provide direct measurement of the improvement.

3. Onboarding Friction Points

For SaaS products and service businesses with structured onboarding sequences, chatbot conversation data maps the friction points in the onboarding journey with precision. If a specific setup step generates high question volume - "How do I connect my [integration]?", "Why isn't [feature] appearing in my account?" - that step is a friction point that documentation, in-product guidance, or onboarding flow redesign could address.

Without chatbot analytics, this friction is often invisible until it shows up in onboarding completion rates or early churn - lagging indicators that take months to accumulate. Chatbot data makes it visible in real time: the question pattern identifies the friction point, and the volume quantifies how many users are hitting it.

4. Product Feature Discovery Failures

A subset of chatbot conversations reveals a specific and costly problem: customers asking how to do something that the product already does, in a way that suggests they do not know the feature exists. "Can I do X?" when X is an existing feature is not a support request - it is a product communication failure. The feature was built, but users are not discovering it.

These conversations, identified through analytics review, map directly to in-product guidance improvements: tooltips, onboarding hints, feature announcement emails, help documentation improvements. Addressing feature discovery gaps reduces the volume of these questions while also increasing feature adoption - which is directly linked to retention outcomes.

5. Human Staffing Alignment

Peak volume patterns from chatbot analytics answer a question that most businesses answer poorly: when do customers need human support, and at what volume? The typical approach - staggering shifts based on historical assumptions - produces chronic coverage mismatches. Teams are overstaffed during low-demand periods and understaffed during peaks.

Chatbot analytics provides the data to align human agent coverage with actual demand. Escalation volume by hour and day of week shows exactly when human agents are most needed. Combining this with AI coverage for off-peak and lower-complexity queries creates a staffing model that is both more efficient and more responsive.

Analytics-Driven Optimization vs. Set-and-Forget Deployment

The performance gap between businesses that actively use conversation analytics and those that deploy a chatbot and leave it unchanged is substantial and well-documented.

| Dimension | Set-and-Forget Deployment | Analytics-Driven Optimization |
|---|---|---|
| Knowledge base quality | Static; degrades over time | Continuously updated; improves |
| Non-resolution rate | Stable or increasing | Declining monthly |
| Escalation rate | Stable or increasing | Declining 1-2 percentage points per month |
| Customer satisfaction with chatbot | Flat | Improving quarter-over-quarter |
| Time investment required | Minimal | 2-3 hrs/month |
| Performance at 12 months vs. launch | 80-90% of launch performance | 130-150% of launch performance |
| Knowledge gap identification | None | Systematic, monthly |

The two to three hours per month required for analytics review and the content updates that follow from it represent the highest-return time investment available in the ongoing management of a chatbot deployment. That time converts a static tool into an adaptive one - and the compounding effect of monthly improvements produces meaningful performance differences within 90 days.

Research from Gartner (2024) found that businesses reviewing and acting on chatbot conversation data monthly reduce escalation rates by 1-2 percentage points per month for the first six months post-deployment. Against a baseline escalation rate of 30%, that is a cumulative drop of 6-12 percentage points, to an escalation rate of 18-24%, or a 20-40% relative reduction in escalations within six months - substantially reducing human support burden while simultaneously improving customer experience.

The Continuous Improvement Loop

The operational mechanism for analytics-driven chatbot improvement is a structured monthly review cycle. The process is straightforward and does not require specialized analytics expertise.

Month 1: Establish baseline. Deploy the chatbot, configure categories and intent labels, and let the first full month of conversation data accumulate. The objective is a baseline dataset, not optimization. Note total conversation volume, resolution rate, escalation rate, and the top 10 question categories.

Month 2: Identify gaps. Review the unanswered question log. Identify the top 10 categories of questions the chatbot is answering with low confidence or routing to escalation. These are the priority knowledge base additions. Identify which categories have the highest escalation rates - these represent either knowledge gaps or routing decisions that need adjustment.

Months 2-3: Add targeted content. For each of the top gap categories identified, add specific content to the chatbot's knowledge base. This does not require long-form documentation - it requires accurate, specific answers to the questions that are actually being asked. Track the questions added in a simple log with the date of addition.

Month 3 onwards: Measure resolution improvement. For each added content area, track the resolution rate in the month following the addition. Content additions that produce measurable resolution improvement should inform the next round of additions. Content additions that do not improve resolution indicate that the gap is more structural - a product or policy issue rather than a chatbot training issue.

Ongoing: 30-minute monthly review cycle. After the initial two months, the optimization cycle stabilizes into a monthly review: unanswered questions, escalation patterns, sentiment trends, volume distribution. This 30-minute investment produces compound returns as each month's improvements build on the previous month's baseline.
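The measurement step in the cycle above can be sketched as a comparison of each category's resolution rate in the month before and the month after a content addition. The log format, the monthly rates, and the 5-point `min_gain` threshold below are all hypothetical values chosen for illustration.

```python
# Hypothetical change log: when content for a category was added.
additions = [
    {"category": "returns", "added_month": "2025-03"},
    {"category": "warranty", "added_month": "2025-03"},
]

# Hypothetical monthly resolution rates per category, from batch reports.
monthly_resolution = {
    "returns": {"2025-02": 0.30, "2025-03": 0.41, "2025-04": 0.55},
    "warranty": {"2025-02": 0.48, "2025-03": 0.47, "2025-04": 0.49},
}

def shift_month(month, delta):
    """Shift a 'YYYY-MM' string by `delta` months."""
    year, mon = map(int, month.split("-"))
    index = year * 12 + (mon - 1) + delta
    return f"{index // 12:04d}-{index % 12 + 1:02d}"

def measure_additions(log, rates, min_gain=0.05):
    """Compare each category's resolution rate before and after its addition."""
    results = []
    for entry in log:
        series = rates[entry["category"]]
        before = series.get(shift_month(entry["added_month"], -1))
        after = series.get(shift_month(entry["added_month"], 1))
        if before is None or after is None:
            continue  # not enough data yet to judge this addition
        gain = after - before
        verdict = "improved" if gain >= min_gain else "review: possibly structural"
        results.append((entry["category"], round(gain, 2), verdict))
    return results

results = measure_additions(additions, monthly_resolution)
for row in results:
    print(row)
```

A category that shows no gain after an addition is the cue, as described above, to look for a product or policy issue rather than add more content.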

Setting Up an Analytics-Driven Optimization Process

For businesses ready to implement this approach, the practical setup requires three components.

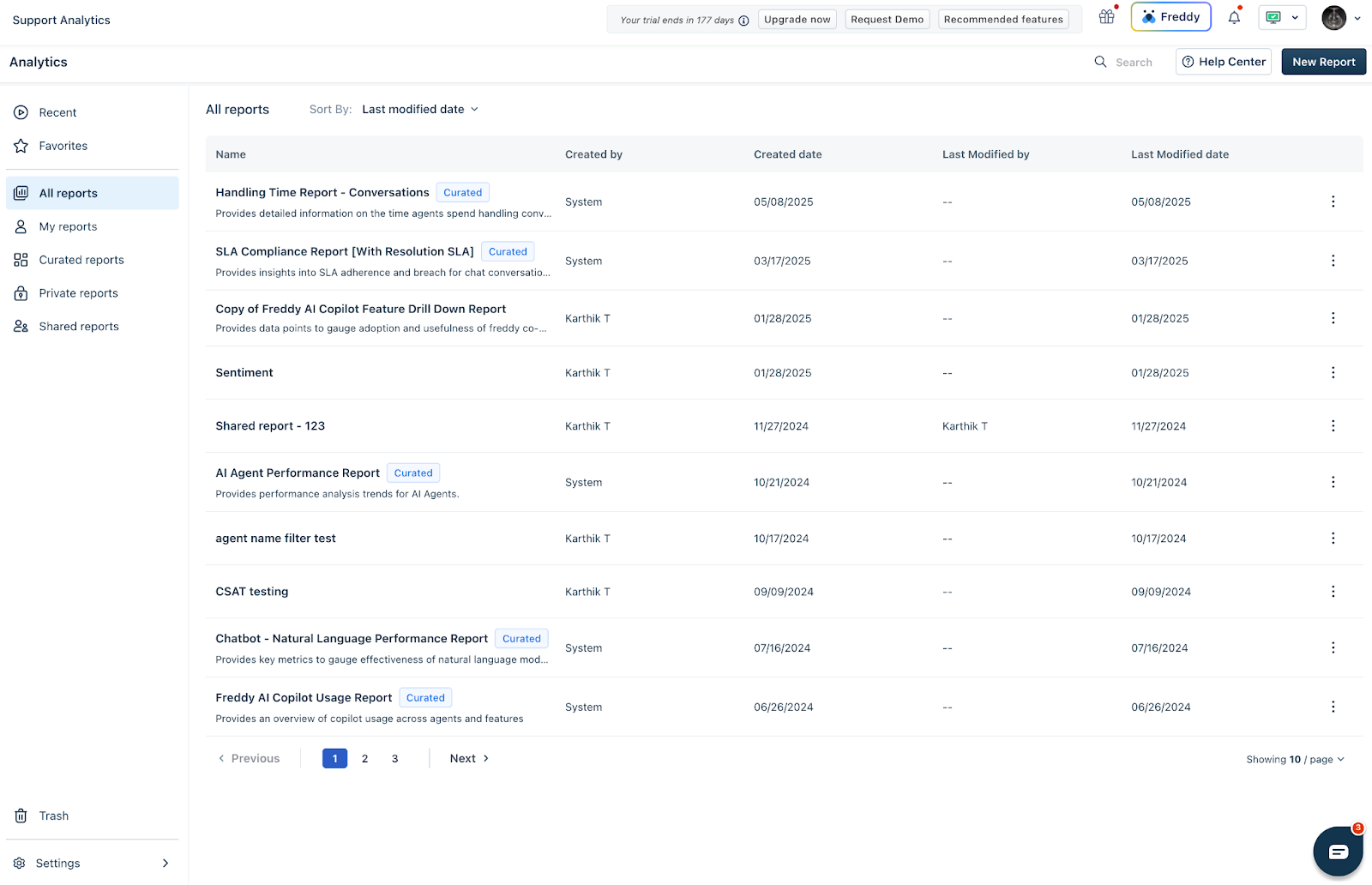

A key metrics dashboard. Configure visibility into: total conversation volume, resolution rate, escalation rate, unanswered question volume, average satisfaction rating (if collected), and volume by category. This dashboard should be reviewable in under five minutes and surface any significant deviation from the previous period's baseline.

Alert configuration. Set thresholds for anomaly detection: escalation rate above 40%, conversation volume 50% above baseline (indicating a product or service issue driving inquiry), and significant decline in satisfaction rating. Alerts ensure that emerging problems surface immediately rather than only during scheduled reviews.

Monthly review process. A structured 30-minute review each month produces the gap analysis, content prioritization, and iteration tracking that drives continuous improvement. The review should produce: a list of content additions made in the past month, measurement of impact from previous additions, and a prioritized list of additions for the coming month.
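The alert thresholds described above translate directly into a small check that runs against current and baseline metrics. This is a sketch, not a platform feature: the metric names are hypothetical, and because the text does not quantify a "significant" satisfaction decline, the 0.5-point cutoff on a 1-5 scale is an assumed value.

```python
# Thresholds from the text; the satisfaction drop cutoff is an assumed value.
ESCALATION_RATE_MAX = 0.40
VOLUME_INCREASE_MAX = 0.50
SATISFACTION_DROP_MAX = 0.5  # assumed: points on a 1-5 rating scale

def check_alerts(current, baseline):
    """Return a list of alert messages comparing current metrics to the baseline."""
    alerts = []
    if current["escalation_rate"] > ESCALATION_RATE_MAX:
        alerts.append(f"escalation rate {current['escalation_rate']:.0%} above 40%")
    if current["volume"] > baseline["volume"] * (1 + VOLUME_INCREASE_MAX):
        alerts.append(f"volume {current['volume']} is over 50% above baseline")
    if baseline["satisfaction"] - current["satisfaction"] >= SATISFACTION_DROP_MAX:
        alerts.append("satisfaction rating dropped significantly")
    return alerts

baseline = {"volume": 400, "escalation_rate": 0.28, "satisfaction": 4.3}
current = {"volume": 650, "escalation_rate": 0.44, "satisfaction": 4.2}
alerts = check_alerts(current, baseline)
for message in alerts:
    print(message)
```

Keeping the thresholds as named constants makes it easy to revisit them during the monthly review as the baseline shifts.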

Platforms like Paperchat provide this analytics infrastructure as part of the core product - conversation logs, category tracking, escalation reporting, and the knowledge base management tools needed to act on what the data reveals. The intelligence is generated automatically. The improvement it drives depends on systematic review and action.

The Compounding Return on Analytics Investment

The most important dimension of conversation analytics is its compounding nature. A chatbot that is reviewed and improved monthly does not stay at its launch-day performance level - it consistently outperforms that baseline as the knowledge base expands, gap content is added, and routing logic is refined.

Within 90 days of systematic analytics-driven optimization, businesses consistently observe what amounts to a performance step-change relative to the set-and-forget deployment curve. The chatbot becomes more accurate, more comprehensive, and more likely to resolve customer questions without escalation - not because the underlying AI has changed, but because the information it draws on has become progressively more complete and accurate.

This compounding dynamic is why businesses that invest 30-60 minutes per month reviewing conversation data consistently outperform those that do not - and why the return on that time investment grows rather than diminishes over the deployment lifetime.

The data is already being generated. The question is whether it is being used.
