Not all chatbots work the same way. The word "chatbot" is applied equally to the simple Facebook Messenger bot that walks a user through four menu options and the AI agent that can answer a complex multi-part question about your enterprise pricing. These are not variations on the same technology. They are fundamentally different systems, with different capabilities, different failure modes, and appropriate use cases that do not entirely overlap.

The distinction matters because businesses frequently make deployment decisions based on feature checklists rather than architectural understanding. A rule-based bot and an AI-powered agent might both have chat interfaces, both be embedded in a website, and both route to a human agent when escalation is appropriate. But what happens in between differs enormously, and that difference determines whether your chatbot handles 20% of your support load or 80% of it.

Two Fundamentally Different Approaches

The core distinction is this: rule-based chatbots execute pre-programmed paths. AI-powered chatbots understand what a user is trying to do and generate an appropriate response.

In a rule-based system, a human designer has anticipated a set of questions or inputs and written explicit responses or flows for each. The bot navigates a decision tree. Every branch has to be built in advance. If a user's input falls outside the anticipated branches, the system either fails to recognize it, returns a generic fallback, or presents a menu. The bot knows nothing. It follows instructions.

In an AI-powered system, the chatbot uses a large language model (LLM) to understand the user's intent from natural language, accesses a knowledge base of your business content to find relevant information, and generates a response. There is no pre-programmed path. The conversation unfolds based on what the user actually says, not on which branch of a decision tree their input most closely matches.

Both approaches have real utility. Understanding which one serves which purpose is the basis for making an informed decision.

Rule-Based Chatbots: How They Work

A rule-based chatbot operates on conditional logic. The most common implementations use one of three mechanisms:

Decision trees present users with structured choices at each step. "Are you inquiring about billing or technical support?" The user selects an option, which determines the next question or response, and so on. The conversation is essentially a form filled out through a chat interface.

Keyword triggers scan the user's input for specific words or phrases and map them to pre-written responses. If the message contains "refund," return the refund policy text. If it contains "cancel," return the cancellation instructions. The mapping is explicit and manual.

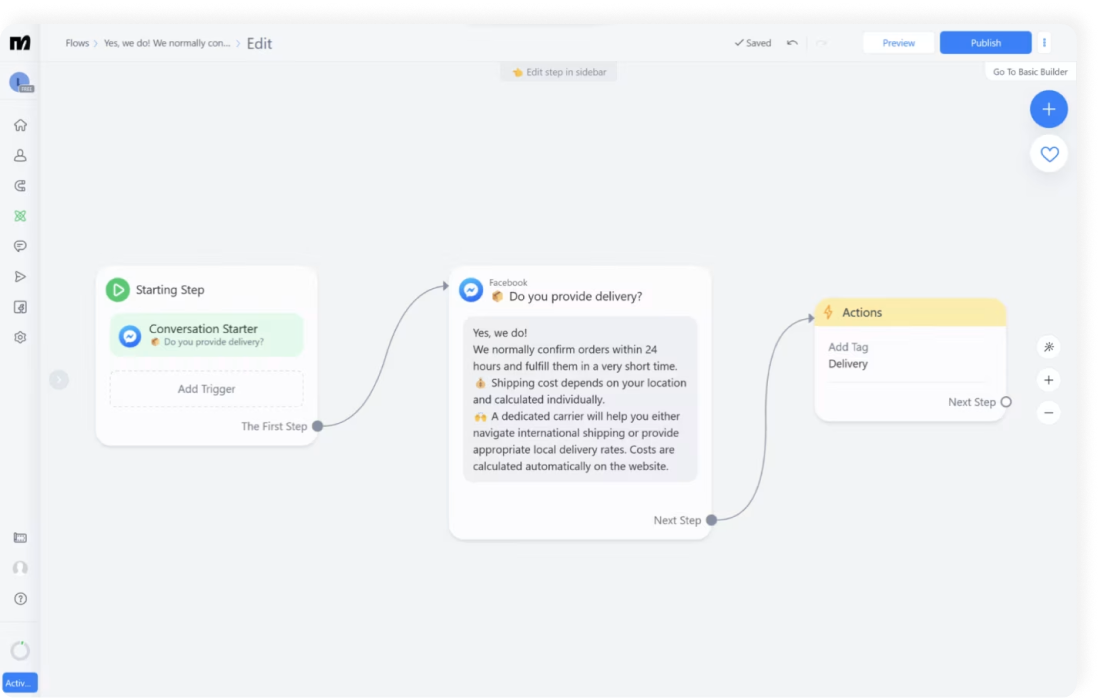

Flow builders (as used in tools like ManyChat, Chatfuel, and early Facebook Messenger bots) allow non-technical teams to visually design conversation flows: sequences of messages, conditional branches, and response blocks. The visual layer makes them accessible to marketing teams, but the underlying logic is still deterministic and pre-programmed.
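To make the first two mechanisms concrete, here is a minimal sketch in Python. The response strings, the node names, and the functions `keyword_reply` and `next_node` are hypothetical and chosen for illustration. They do not reflect how ManyChat, Chatfuel, or any other tool is implemented.

```python
import re

# Hypothetical keyword-to-response table; the bracketed text stands in for real copy.
KEYWORD_RESPONSES = {
    "refund": "[refund policy text]",
    "cancel": "[cancellation instructions]",
}
FALLBACK = "Sorry, I didn't catch that. Please choose: Billing or Technical support."


def keyword_reply(message: str) -> str:
    """Return the first pre-written response whose keyword appears as a word in the message."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    for keyword, response in KEYWORD_RESPONSES.items():
        if keyword in words:
            return response
    return FALLBACK


# Hypothetical decision tree: each node has a prompt and the options that lead onward.
DECISION_TREE = {
    "start": {
        "prompt": "Are you inquiring about billing or technical support?",
        "options": {"billing": "billing", "technical support": "tech"},
    },
    "billing": {"prompt": "[billing instructions]", "options": {}},
    "tech": {"prompt": "[troubleshooting instructions]", "options": {}},
}


def next_node(current: str, choice: str) -> str:
    """Follow the branch for the user's choice; stay on the same node if it is not a listed option."""
    options = DECISION_TREE[current]["options"]
    return options.get(choice.strip().lower(), current)
```

In this sketch, `keyword_reply("Can I cancel and get a refund?")` returns only the refund text, because the first matching keyword wins, and `keyword_reply("I thought I canceled it")` falls through to the fallback because "canceled" is not the exact keyword "cancel". Both behaviors follow directly from the explicit, manual mapping.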

Early chatbot deployments, from approximately 2016 through 2021, were predominantly rule-based. The Interactive Voice Response (IVR) systems that greet you when calling a bank or airline are rule-based systems implemented in voice rather than text. They are instructive examples of both the capability and the frustration that rule-based systems produce.

What Rule-Based Systems Do Well

Rule-based chatbots have earned their place in the toolbox because they genuinely excel in specific, narrow contexts.

Total predictability. Every response in a rule-based system was written by a human. There is no probability of unexpected output, no hallucination, no variation. For regulated industries where the exact wording of responses matters legally, this level of control is a real advantage.

Narrow, structured processes. A five-step booking flow, a qualification sequence for a lead capture form, a support ticket routing tool that determines which team should handle an inquiry. For tasks with defined inputs and outputs, where the goal is to collect specific information in a specific order, rule-based logic is clean and effective.

Lower operational cost for simple use cases. For a bot designed to do one thing, such as collect a contact name, email address, and inquiry type before routing to a human, a rule-based implementation is fast to build and requires minimal ongoing maintenance as long as the process itself does not change.

Audit simplicity. Every response can be traced back to a specific rule or flow. For compliance purposes, this traceability is straightforward. There is no generated content that requires review for accuracy.

Works without training data. You do not need a knowledge base. You need a script. For businesses without structured documentation, or for very early-stage deployments, this reduces the setup requirement.

Where Rule-Based Systems Break Down

The limitations of rule-based chatbots are not edge cases. They appear in the majority of real-world deployments because real customers do not interact the way the system assumes they will.

Unanticipated inputs produce failure. A rule-based system can only handle inputs it was designed for. A user who types "I bought a blender last week and one of the parts broke" when the system expects them to choose "Order Status" or "Returns" from a menu will either be misrouted or presented with a fallback message that fails to address their situation. The more varied the user's language, the higher the failure rate.

Research from Gartner found that 63% of customer complaints about chatbots involve the system failing to understand or respond appropriately to their specific question, even when the question fell within the chatbot's intended scope (Gartner, 2025). For rule-based systems specifically, the failure rate on open-ended inputs exceeds 45% in median deployments.

Complex, multi-part questions cannot be handled. A customer who asks "Can I add a second user to my account, and if so, what plan do I need to be on, and is there a cost for that?" is asking three related questions in one message. A rule-based system has no mechanism for decomposing this. It will match on a keyword, return a single pre-written response, and miss the full intent.

Maintenance burden scales with complexity. Every new question type requires a new rule, and each new rule has to be checked against the ones already in place. A growing business with an expanding product catalog, evolving policies, and new customer segments faces steeply increasing maintenance requirements to keep a rule-based system current. What began as a manageable matrix of flows becomes, within 18 months, a sprawling decision tree that nobody fully understands and that several team members are afraid to modify.

Rigid flows frustrate users at scale. Customers who have a genuine question and are forced through a scripted menu experience the interaction as an obstacle, not a service. The frustration compounds when the menu does not contain an option that matches their situation. 47% of customers who abandoned a chatbot interaction cited "couldn't get the answer I needed" as the primary reason, with rule-based navigation the most common context for this failure (Forrester, 2024).

No synthesis across sources. A rule-based system returns pre-written responses. It cannot take your pricing table, your feature documentation, and your FAQ and synthesize a single coherent answer that addresses a complex question drawing from all three. Each piece of content is mapped to specific triggers and returned as a unit. The system is not reasoning. It is pattern-matching.

AI-Powered Chatbots: How They Work

An AI-powered chatbot combines a large language model with a knowledge retrieval layer. The most widely deployed architecture is Retrieval-Augmented Generation (RAG), which works as follows:

You provide your business content: website pages, product documentation, FAQs, policy documents, support guides. This content is processed into a vector-indexed knowledge base. When a user sends a message, the system finds the most relevant sections of that knowledge base and passes them to the language model along with the user's question. The model generates a response grounded in your specific content.

This means the chatbot can understand a question phrased in any natural language variant, find the relevant information across your entire knowledge base, and synthesize a coherent, accurate, appropriately toned response, without any pre-programmed path.
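The retrieval step described above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions: word-count vectors and cosine similarity stand in for a real embedding model and vector index, the `KNOWLEDGE_BASE` entries are invented, and the final call to a language model is left out. The function names are hypothetical.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge-base chunks; a real deployment would index far more content.
KNOWLEDGE_BASE = [
    "Refund policy: purchases can be refunded within the refund window.",
    "Cancellation: you can cancel your subscription from the account settings page.",
    "Team plan: includes collaboration features for multiple users.",
]


def vectorize(text: str) -> Counter:
    """Toy stand-in for an embedding: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two count vectors (0.0 if either is empty)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, chunks: list[str], top_k: int = 2) -> list[str]:
    """Return the top_k chunks most similar to the question."""
    q = vectorize(question)
    ranked = sorted(chunks, key=lambda c: cosine(q, vectorize(c)), reverse=True)
    return ranked[:top_k]


def build_prompt(question: str, chunks: list[str]) -> str:
    """Assemble the text that would be sent to the language model."""
    context = "\n".join(f"- {c}" for c in chunks)
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )
```

Calling `build_prompt(q, retrieve(q, KNOWLEDGE_BASE))` produces the grounded prompt. In a production system the similarity search would run over learned embeddings rather than word counts, which is what lets it match paraphrases that share no words with the source text.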

The current generation of AI chatbots has moved beyond simple question answering. Modern AI agents can maintain conversation context across multiple turns, remember information the user provided earlier in the conversation, ask clarifying questions when the intent is ambiguous, and adjust their communication style based on detected tone.

What AI-Powered Systems Do Well

The capability advantages of AI-powered chatbots over rule-based systems are substantial and well-documented in production deployments.

Handles the long tail of questions. The most common questions make up perhaps 20% of actual support volume. The other 80% is a long tail of variations, edge cases, combined questions, and unusual phrasings. Rule-based systems are built for the 20% and fail on the 80%. AI-powered systems handle the entire distribution with consistent competence.

Natural language understanding at scale. A customer who types "my subscription renewed but I thought I canceled it" does not fit neatly into a "billing" or "cancellation" flow. An AI-powered system understands the intent, finds the relevant cancellation and billing policies in the knowledge base, and generates a response that addresses the specific situation.

Cross-source synthesis. An AI chatbot can answer "What is the cheapest plan that includes team collaboration and has a 14-day trial?" by simultaneously drawing from your pricing documentation, your feature comparison table, and your onboarding FAQ. No rule-based system can do this. The AI synthesizes information across sources into a single coherent answer.

Updates with the knowledge base. When your pricing changes, you update the knowledge base. The chatbot immediately reflects the updated pricing without any rule modification, flow redesign, or re-deployment. For businesses where pricing, policies, and products change frequently, this removes an entire category of maintenance burden.

Better CSAT outcomes. An analysis of 2.3 million chatbot interactions across enterprise deployments found that AI-powered systems achieved CSAT scores 28-35% higher than rule-based systems handling the same types of questions (Zendesk Annual Report, 2025). The improvement is attributable primarily to first-contact resolution: users getting the answer they needed without needing to escalate or rephrase.

Handles emotional nuance. A user who opens a chat with "I am incredibly frustrated, this is the third time I have had this problem" needs a different response than a user who opens with "quick question about billing." AI-powered systems with sentiment awareness can recognize the emotional context and modulate both tone and escalation behavior accordingly.

AI-Powered Limitations: What to Understand Before Deploying

An accurate evaluation of AI-powered chatbots requires acknowledging their genuine constraints.

Responses are probabilistic, not deterministic. The same question asked twice may produce slightly different responses. For businesses where exact response wording matters (certain compliance contexts, script-based qualification flows), this requires either additional configuration constraints or a hybrid approach where AI handles some interactions and rule-based logic handles others.

Cost per interaction is higher. LLM inference on each message costs more than matching against a rule set. For very high-volume deployments with simple use cases, the cost differential may be relevant. For most business deployments, the deflection rate improvement makes the economics strongly favorable, but the per-interaction cost is a real consideration.

Quality depends on knowledge base quality. An AI chatbot is only as good as the content it retrieves from. Outdated documentation produces outdated answers. Gaps in coverage produce non-answers or, in a poorly configured system, hallucinations. This creates an ongoing operational requirement to maintain the knowledge base that rule-based systems do not impose in the same way.

Less auditable on individual responses. With a rule-based system, you can trace exactly why a given response was returned. With an AI system, the response is generated, and while you can inspect the retrieved context chunks that informed it, the generation itself is not as directly traceable to a single rule. This is an accepted trade-off in most deployments, but it matters in high-compliance contexts.

The Hybrid Approach: The Real Standard in 2026

The framing of "rule-based vs. AI-powered" implies a binary choice. Most well-designed chatbot deployments in 2026 use both, applied to the situations each handles best.

Structured, linear processes are rule-based by design. A lead capture form with five required fields, a booking flow that needs specific scheduling information, a support ticket router that classifies inquiries before handing off: these benefit from the predictability and control of rule-based logic. The outcome is defined. The inputs are constrained. There is no value in generative flexibility.

Open-ended support and sales questions are AI-powered. Questions about products, policies, features, pricing, troubleshooting, and any other free-form inquiry are best handled by an AI system drawing from the knowledge base. The questions are unpredictable. The relevant information is distributed across multiple sources. Natural language variation is high.

A well-designed deployment connects these layers. The AI handles the majority of conversations. For conversations that need to collect structured data, generate a support ticket, or follow a defined process, the AI can hand off to a rule-based flow or trigger an automated action. The transition should be invisible to the user.
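One way to picture the handoff is a small router that sends each message either to the AI layer or to a structured flow. The sketch below is an assumption-laden illustration, not any platform's implementation: `wants_structured_flow` uses a simple phrase check as a stand-in for whatever signal a real system uses, `answer_with_ai` is a placeholder for the retrieval and LLM call, and the field list is hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical fields the structured flow collects, in order.
LEAD_FIELDS = ["name", "email", "inquiry type"]


@dataclass
class Conversation:
    lead_data: dict = field(default_factory=dict)
    in_lead_flow: bool = False


def wants_structured_flow(message: str) -> bool:
    """Hypothetical trigger: a phrase check standing in for a real handoff signal."""
    text = message.lower()
    return "demo" in text or "talk to sales" in text


def answer_with_ai(message: str) -> str:
    """Placeholder for the AI layer (retrieval plus a language model call)."""
    return f"[AI answer to: {message}]"


def handle(conv: Conversation, message: str) -> str:
    """Route one user message to the rule-based flow or to the AI layer."""
    if conv.in_lead_flow:
        # Rule-based part: store the answer to the field that was just asked for.
        pending = next(f for f in LEAD_FIELDS if f not in conv.lead_data)
        conv.lead_data[pending] = message.strip()
        remaining = [f for f in LEAD_FIELDS if f not in conv.lead_data]
        if remaining:
            return f"Thanks. What is your {remaining[0]}?"
        conv.in_lead_flow = False  # flow finished; later messages go back to the AI
        return "Thanks, a team member will follow up."
    if wants_structured_flow(message):
        conv.in_lead_flow = True
        return f"Happy to help. What is your {LEAD_FIELDS[0]}?"
    return answer_with_ai(message)
```

The design choice worth noting is that the conversation state, not the user, decides which layer answers. From the user's side it is one continuous chat.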

Paperchat operates as an AI-powered platform that integrates structured lead capture flows alongside the conversational AI layer. The AI handles open-ended questions, answers from the knowledge base, and maintains conversation context. When a conversation reaches a point where the business wants to collect specific contact information or qualify a prospect, a structured lead capture flow is triggered. The business gets the flexibility of AI for support and the predictability of structured flows for conversion processes.

Comparison: Rule-Based vs. AI-Powered vs. Hybrid

| Dimension | Rule-Based | AI-Powered | Hybrid |
|---|---|---|---|
| Setup time | Fast for simple flows; slow for complex ones | Moderate (knowledge base required) | Moderate to high |
| Response accuracy | High for anticipated inputs; fails on unanticipated ones | High across varied inputs | High across full scope |
| Maintenance burden | High and grows with product/policy complexity | Low (update knowledge base) | Moderate |
| Cost per interaction | Very low | Low to moderate | Low to moderate |
| Flexibility | Low | Very high | High |
| Handles complex questions | No | Yes | Yes |
| Predictability | Complete | Moderate | Moderate to high (depends on design) |
| Best use case | Structured flows, narrow FAQ, lead forms | Broad support, open-ended Q&A, knowledge-heavy interactions | Full-service deployment with both structured and conversational needs |
| Example platforms | ManyChat, Chatfuel, early IVR | Paperchat, Intercom Fin, Drift AI | Most modern enterprise platforms |

When to Choose Each Approach

The right architecture depends on what your chatbot needs to accomplish.

Choose rule-based when:

- You need to collect structured information in a specific sequence (booking, lead qualification, ticket routing)

- Every possible input can be anticipated and the list is short

- Exact response wording is required by compliance or legal standards

- You are building a very narrow, single-purpose tool (e.g., "schedule a demo" only)

- Cost is the primary constraint and the use case is extremely simple

Choose AI-powered when:

- Your customers ask varied, open-ended questions about your products, policies, or services

- Your knowledge base is distributed across multiple documents and pages

- You need the chatbot to work correctly even when customers phrase questions unpredictably

- You want to reduce human support volume meaningfully, not just handle a small set of FAQ responses

- Your content changes regularly and you need the chatbot to stay current without manual rule updates

Choose hybrid when:

- You need both free-form conversation handling and structured data collection

- You want AI for support and rule-based logic for qualification or booking flows

- You are deploying a full-service chat experience rather than a single-function tool

- You are managing significant conversation volume across both support and sales use cases

The direction of travel is clear. Between 2020 and 2025, the share of new chatbot deployments using AI-powered architectures grew from 18% to 74% (Gartner, 2025). Existing rule-based deployments remain in production, but new deployments are overwhelmingly AI-powered or hybrid. The economics and capability of AI have made the rule-based approach the legacy option for most business use cases.

Measuring the Right Metrics for Each Type

The metrics that matter differ by architecture.

For rule-based systems, the key performance indicators are flow completion rate (how often users complete the intended path without dropping off), fallback rate (how often the system cannot match an input to a rule), and escalation rate (how often users abandon the bot for a human agent before the flow completes).

For AI-powered systems, the key metrics are first-contact resolution rate (the percentage of conversations fully resolved without human involvement), answer accuracy rate (measured through periodic sampling and a fixed benchmark test, such as a standing set of 20 representative questions), CSAT (post-interaction satisfaction scores), and confidence score distribution (the spread of the model's own confidence assessments across conversations).

For hybrid systems, you need both sets of metrics, applied to the relevant portions of the conversation portfolio.
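As a rough sketch of how these rates could be computed from logged sessions, consider the Python below. The `FlowSession` record and its field names are hypothetical, and counting fallback rate per user input rather than per session is an assumption. Your platform's definitions may differ.

```python
from dataclasses import dataclass


@dataclass
class FlowSession:
    completed: bool   # user reached the end of the intended path
    fallbacks: int    # inputs the bot could not match to a rule
    messages: int     # total user inputs in the session
    escalated: bool   # user left for a human before the flow completed


def rate(numerator: int, denominator: int) -> float:
    """Safe ratio that returns 0.0 when there is no data."""
    return numerator / denominator if denominator else 0.0


def rule_based_metrics(sessions: list[FlowSession]) -> dict[str, float]:
    """Flow completion, fallback, and escalation rates for rule-based sessions."""
    total = len(sessions)
    return {
        "flow_completion_rate": rate(sum(s.completed for s in sessions), total),
        # Assumption: fallback rate is measured per user input.
        "fallback_rate": rate(
            sum(s.fallbacks for s in sessions), sum(s.messages for s in sessions)
        ),
        "escalation_rate": rate(sum(s.escalated for s in sessions), total),
    }


def first_contact_resolution(resolved_without_human: list[bool]) -> float:
    """Share of AI-handled conversations fully resolved without human involvement."""
    return rate(sum(resolved_without_human), len(resolved_without_human))
```

For a hybrid deployment, the same functions can be applied separately to the sessions that ran through a structured flow and to those handled by the AI layer.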

Businesses that measure these metrics from day one, and use them to guide knowledge base updates and configuration changes, consistently outperform those that deploy and do not revisit performance data. The difference between a chatbot that deflects 30% of support volume and one that deflects 75% is almost entirely explained by the quality of ongoing operational attention, not by the initial setup.

The Real Question for Business Owners

The decision between rule-based and AI-powered is not primarily a technical decision. It is a business decision about what you want the chatbot to do and how much of your support and sales conversation volume you want it to handle competently.

A rule-based bot can handle the short list of questions you built flows for. It will fail on everything else, and in a growing business, "everything else" becomes the majority of actual conversation volume.

An AI-powered bot can handle the long tail of questions your customers actually ask, in the language they actually use, drawing from the full depth of your business content. The investment is in building and maintaining a good knowledge base, which is not a technical task. It is a content task, and it is the same work you should be doing to keep your website, your documentation, and your support materials current.

The chatbot category that creates genuine business value at scale is the one built on language understanding and knowledge retrieval, not the one built on branching logic and keyword triggers. The supporting data, across deflection rates, CSAT scores, and support volume reduction, points consistently in the same direction.
