How One E-Commerce Store Cut Support Emails by 60% with an AI Chatbot

A detailed case study showing how a mid-size home goods retailer reduced weekly support volume by 61%, improved CSAT from 3.8 to 4.6, and cut cart abandonment from 74% to 61% - all within 90 days of AI chatbot deployment.

Note: "Brightfield Home" is a composite case study representing outcomes seen across multiple mid-size e-commerce deployments. Revenue figures, team sizes, and metrics are representative of real implementations at businesses with similar profiles. The patterns, failure modes, and results described here are drawn from documented deployments.

Every support team at a growing e-commerce operation knows the feeling. A manageable queue on Monday morning becomes a crisis by Wednesday during a sale. Q4 arrives and the team that handled 450 tickets last week is suddenly staring at 800. The same eight questions - where is my order, what is the return policy, do you ship to [country], can I change my address - arrive in an unbroken stream, each one answered by a human who spent 3 to 5 minutes on a response that, structurally, could have been automated.

The business case for AI-powered support in e-commerce is not complicated. The implementation, when done carefully, is not particularly difficult either. The hard part is the organizational transition: understanding what genuinely needs human judgment and what has been consuming human time because no alternative existed.

This case study documents that transition at Brightfield Home, a mid-size home goods retailer representative of hundreds of similar e-commerce operations that have made this shift in the past 18 months. The outcomes are specific. The approach is replicable.

The Business Before Deployment

Brightfield Home sells mid-range home goods - furniture accessories, textiles, kitchen items, decorative objects - primarily through its own DTC website. Annual revenue of approximately $2M. Monthly order volume around 1,500, with significant seasonality: Q4 and major sale events (Labor Day, Black Friday, January clearance) produce two to three times the normal order volume.

The support function was staffed by three full-time agents handling all customer contact across email, a live chat widget, and occasional phone inquiries. Weekly contact volume during normal periods ran between 420 and 470 items. During peak periods, that number climbed to 750 to 850.

Average first response time sat at 6 to 8 hours for email - acceptable by the standards of a few years ago, but increasingly out of step with customer expectations. The chat widget was staffed only during business hours (9am to 6pm weekdays), so the roughly 40% of chat attempts that arrived during evenings and weekends went unanswered.

The 8 Questions That Dominated Support Volume

An internal audit of six weeks of support contacts found that 65% of all incoming volume fell into just eight question categories:

- Order status / tracking information

- Return eligibility and return process

- Shipping timelines and costs

- International shipping availability

- Product dimensions, materials, and care instructions

- Discount code application issues

- Address change requests post-order

- Stock availability for out-of-stock items

These eight categories did not require judgment. They required accurate, current information that was already documented somewhere in the business - on the website, in the shipping policy, in product descriptions. A human was required only because there was no alternative mechanism for delivering that information to the customer at the moment they needed it.

The Financial Picture

Three support agents at market rate for the business's region: approximately $45,000 per agent per year, all-in. Total support function cost: $135,000 annually.

That cost was carrying volume that was largely automatable. The team was spending approximately 65% of its productive time on questions with known answers. The remaining 35% - genuine complaints, complex order disputes, damaged goods claims, high-value customer relationships - was the work that actually required human attention and judgment.

Adding a fourth agent was under active consideration ahead of Q4. The incremental cost: $45,000. The operational reality: it would address peak volume, not the underlying inefficiency. After Q4, the team would be overstaffed for normal periods.
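As a rough illustration of what those percentages mean in dollars, the sketch below splits the annual support cost by the share of time spent on routine questions. Allocating cost in proportion to time is an assumption made here for illustration; the case study does not break the budget down this way.

```python
# Figures from the case study; the cost-by-time-share split is an assumption.
AGENTS = 3
COST_PER_AGENT = 45_000      # all-in annual cost per agent (USD)
ROUTINE_SHARE_PCT = 65       # % of productive time on known-answer questions

total_cost = AGENTS * COST_PER_AGENT
routine_cost = total_cost * ROUTINE_SHARE_PCT // 100
judgment_cost = total_cost - routine_cost

print(f"Total support cost:         ${total_cost:,}")
print(f"Spent on routine questions: ${routine_cost:,}")
print(f"Spent on judgment work:     ${judgment_cost:,}")
```

On these numbers, roughly $87,750 of the $135,000 budget was going to questions with documented answers, which is nearly twice the cost of the proposed fourth hire.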

The Breaking Points

Three specific pain points pushed the business toward an AI solution:

Cart abandonment feedback. An exit survey deployed on the checkout page found that 23% of abandoning customers cited "couldn't get an answer quickly enough" as a reason they did not complete the purchase. In the context of a $2M business with an estimated 4-7% conversion rate, each percentage point of cart abandonment recovery represented meaningful revenue.

Customer satisfaction erosion. CSAT scores measured through post-purchase email surveys were sitting at 3.8 out of 5 - not a crisis, but declining over the previous two quarters, with slow response times cited most frequently in open-ended comments.

Team burnout during peaks. The three-person team was experienced and effective, but Q4 required extended hours, temporary contractor support that took weeks to onboard, and a recovery period in January where morale was measurably lower. The pattern was not sustainable.

The Solution: AI Chatbot Implementation

Platform and Approach

The team deployed an AI chatbot on the website with training grounded in business-specific content. Implementation was handled internally, without a dedicated technical resource - the operations director and one support agent managed the setup over approximately four working days.

The chatbot was built on Paperchat, configured with the following training sources:

- Full product catalog with dimensions, materials, care instructions, and compatibility notes

- Shipping policy (domestic rates, international availability, transit times by region)

- Return policy (eligibility window, conditions, exceptions, step-by-step process)

- Size and material guides for the furniture and textile categories

- Comprehensive FAQ (synthesized from the most common support questions)

- Post-purchase instruction documents

All content was provided as a combination of uploaded documents and website URL syncing, which kept the chatbot's knowledge base current with policy updates without requiring manual re-uploads.

Configuration Choices

Several configuration decisions proved significant in the outcome:

Proactive trigger on the checkout and cart pages. When a customer spent more than 45 seconds on the cart page without progressing, the chatbot proactively offered assistance. This directly addressed the cart abandonment feedback from exit surveys.

Product page triggers for high-consideration items. On furniture and higher-priced items, a proactive message appeared after 60 seconds offering to answer questions about dimensions, materials, or shipping.

Human handover for specific categories. Complaints about damaged goods, order disputes over $200, requests for refund exceptions, and explicit requests for a human agent were all configured to trigger immediate escalation with full context transfer to the support team queue.

After-hours expectation setting. When escalation was needed outside business hours (6pm to 9am weekdays, all weekend), the chatbot confirmed that a human agent would follow up by the next business morning and sent an email confirmation with a reference number.
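The trigger and after-hours rules above can be sketched as plain data plus two small functions. This is a minimal illustration, not the platform's actual configuration format: the `ProactiveTrigger` class, the page labels, and the message wording are all hypothetical, and only the 45-second and 60-second thresholds and the 9am to 6pm weekday hours come from the case study.

```python
from dataclasses import dataclass
from datetime import datetime, time
from typing import Optional


@dataclass(frozen=True)
class ProactiveTrigger:
    page_type: str      # hypothetical page label
    idle_seconds: int   # time on page before the chatbot offers help
    message: str        # hypothetical wording


TRIGGERS = [
    ProactiveTrigger("cart", 45, "Any questions before you check out?"),
    ProactiveTrigger("product_high_consideration", 60,
                     "Want details on dimensions, materials, or shipping?"),
]

BUSINESS_OPEN = time(9, 0)
BUSINESS_CLOSE = time(18, 0)


def trigger_for(page_type: str, seconds_on_page: float) -> Optional[ProactiveTrigger]:
    """Return the trigger that should fire for this page, if any."""
    for trigger in TRIGGERS:
        if trigger.page_type == page_type and seconds_on_page > trigger.idle_seconds:
            return trigger
    return None


def is_business_hours(now: datetime) -> bool:
    """True on weekdays between 9am and 6pm (local time assumed)."""
    return now.weekday() < 5 and BUSINESS_OPEN <= now.time() < BUSINESS_CLOSE


def escalation_notice(now: datetime) -> str:
    """Message shown to the customer when a conversation is escalated."""
    if is_business_hours(now):
        return "Connecting you with a support agent now."
    return ("Our team is offline right now. An agent will follow up by the "
            "next business morning, and a confirmation email with a "
            "reference number is on its way.")
```

Keeping thresholds in data rather than in code makes the later tuning phase cheaper, since trigger timing can change without touching the logic.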

Implementation Timeline

| Period | Activity |
|---|---|
| Week 1, Days 1-2 | Platform signup, training content preparation and upload, initial knowledge base review |
| Week 1, Days 3-4 | Configuration of triggers, handover rules, tone settings; internal QA testing with 30 representative questions |
| Week 1, Day 5 | Soft launch on two product category pages; team monitoring responses in real time |
| Week 2 | Full site deployment; daily conversation review by support agent; knowledge base gaps identified and filled |
| Weeks 3-4 | Tuning phase - response length adjustments, trigger timing refinement, additional FAQ content added |
| Weeks 5-12 | Normal operation; weekly conversation sample review; knowledge base updates as products and policies change |

The Results: First 90 Days

Volume and Deflection

The headline metric was inbound support volume. Before deployment, the team handled an average of 450 support contacts per week (email and chat combined). By week 12 post-deployment, that figure had dropped to 176 contacts per week - a reduction of 61%.
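The percentage follows directly from the two weekly figures. The helper name below is illustrative only.

```python
def percent_reduction(before: float, after: float) -> float:
    """Relative drop from `before` to `after`, as a percentage."""
    return (before - after) / before * 100


weekly_before = 450
weekly_after = 176

contacts_removed = weekly_before - weekly_after
reduction = percent_reduction(weekly_before, weekly_after)

print(f"{contacts_removed} fewer contacts per week ({reduction:.1f}% reduction)")
```

The same helper is useful for tracking the figure week by week during the tuning phase rather than only at day 90.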

The AI chatbot handled 73% of all chat interactions without requiring human involvement. The conversations it could not resolve were escalated with full context, meaning human agents were entering interactions with background already established rather than starting from scratch.

Figure: Weekly support volume before and after AI chatbot deployment. A 16-week timeline of inbound support contacts (emails + chats) at Brightfield Home, with the AI chatbot deployed at Week 4. Composite case study; results are representative of mid-size e-commerce deployments.

The volume reduction was not uniform across question categories. The eight high-volume question types that had previously consumed 65% of support time saw near-complete deflection - those conversations were handled by the chatbot in the vast majority of cases. Human contact volume shifted substantially toward the remaining 35%: genuine complaints, complex disputes, and high-value relationship management.

Response Time

Average first response time dropped from 6 to 8 hours to under 3 minutes. For customers contacting outside business hours - previously unserved - response time went from "no response until the next business day" to "immediate response with clear expectation-setting for any follow-up needed."

This change was most visible in the evening and weekend interaction data. Pre-deployment, 0% of after-hours inquiries received any response before the following business day. Post-deployment, 100% received an immediate AI response, with 73% fully resolved and 27% escalated with a next-business-morning follow-up commitment.

Cart Abandonment Recovery

The proactive trigger on the cart page produced the highest ROI of any single configuration decision. Cart abandonment rate improved from 74% to 61% - a 13-percentage-point improvement attributable primarily to customers who previously could not get a quick answer to a blocking question.

At an average order value of approximately $135 and 1,500 monthly orders, a 13-point abandonment improvement represented an estimated $26,000 in additional monthly revenue from previously lost intent - revenue that required no additional marketing spend to generate.
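The case study does not show its working for the revenue estimate. One reading that reproduces the figure, offered here as an assumption, applies the 13-point improvement to a base of roughly 1,500 monthly checkout sessions at the stated average order value. A different base would give a different total.

```python
# Assumption: the 13-point improvement is applied to a base of ~1,500
# monthly checkout sessions. The case study does not state its method.
MONTHLY_CHECKOUT_BASE = 1_500
AVERAGE_ORDER_VALUE = 135     # USD, from the case study
ABANDON_BEFORE_PCT = 74
ABANDON_AFTER_PCT = 61

points_recovered = ABANDON_BEFORE_PCT - ABANDON_AFTER_PCT
extra_orders = MONTHLY_CHECKOUT_BASE * points_recovered / 100
extra_revenue = extra_orders * AVERAGE_ORDER_VALUE

print(f"Abandonment points recovered: {points_recovered}")
print(f"Additional orders per month:  {extra_orders:.0f}")
print(f"Additional monthly revenue:   ${extra_revenue:,.0f}")
```

Under that assumption the sketch gives about 195 extra orders and $26,325 per month, in line with the rounded estimate above.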

Customer Satisfaction

Post-purchase CSAT scores improved from 3.8/5 to 4.6/5 over the 90-day period. The improvement tracked closely with the reduction in response time. Open-ended survey comments shifted away from complaints about slow responses toward positive mentions of the chatbot experience itself - customers noted that getting an immediate answer at 11pm felt "surprisingly good" and "professional."

Team Utilization

The operational shift in how the support team spent its time was significant. Before deployment, the team allocated approximately:

- 65% on routine questions (the 8 high-volume categories)

- 20% on moderate-complexity issues

- 15% on complex disputes, complaints, high-value relationships

Post-deployment, the allocation shifted to:

- 15% on routine questions (overflow and edge cases)

- 35% on moderate-complexity issues

- 50% on complex disputes, complaints, high-value relationships

The team was not smaller, but it was substantially more productive on the work that genuinely required human judgment. The fourth hire was taken off the table. The existing team entered Q4 with the AI chatbot absorbing the surge in routine questions, and peak volume was handled without any staffing changes.

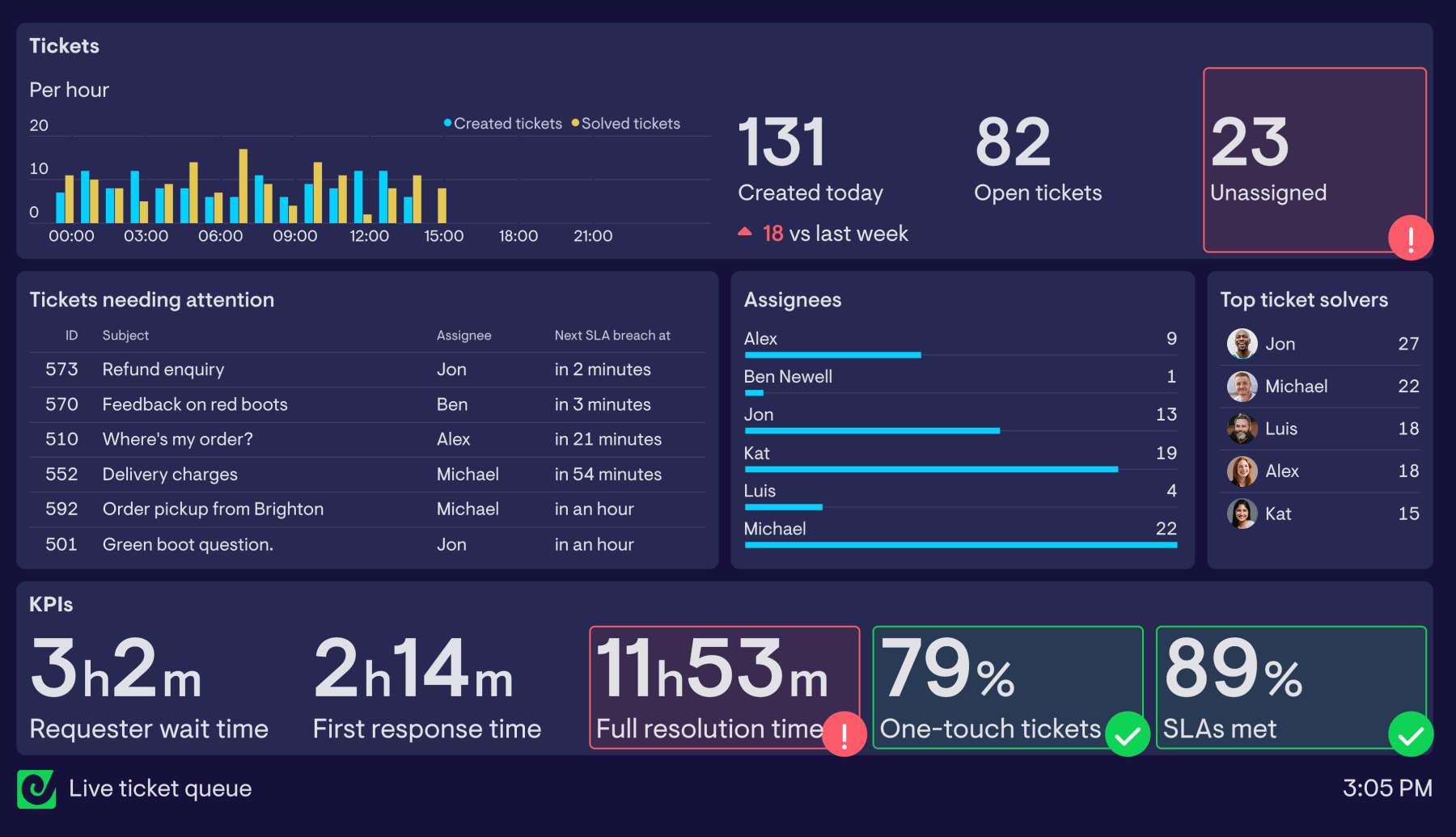

Full Before/After Summary

| Metric | Before AI Chatbot | After 90 Days | Change |
|---|---|---|---|
| Weekly support contacts | ~450 | ~176 | -61% |
| AI deflection rate | 0% | 73% | +73 pts |
| Average first response time | 6-8 hours | < 3 minutes | -99% |
| After-hours response rate | 0% | 100% | +100 pts |
| Cart abandonment rate | 74% | 61% | -13 pts |
| Customer satisfaction (CSAT) | 3.8 / 5 | 4.6 / 5 | +0.8 |
| % of team time on complex issues | 15% | 50% | +35 pts |
| Additional headcount hired | Under consideration | Not needed | $45k saved |

What Changed Operationally

The 8 Questions Are Now Largely Automated

The question categories that previously consumed 65% of support time are now handled by the chatbot in the vast majority of cases, with only overflow and edge cases reaching the team. Order status inquiries trigger a response that walks the customer through the tracking process and links to their specific order if they are logged in. Return policy questions receive the full policy with the step-by-step process. Shipping timeline questions get accurate estimates based on the destination and current product availability.

These interactions do not reach the support queue. They close in the chatbot. The human team's first awareness of them is in the analytics dashboard, not in their inbox.

Human Agents Work on Higher-Value Problems

The shift in team utilization described above is not just an efficiency metric - it is a qualitative change in what the job looks like. Support agents who spent most of their previous year answering "where is my order" are now handling the cases that genuinely require empathy, negotiation, and judgment: a customer who received damaged furniture and is upset, a high-value wholesale buyer with a complex order dispute, a loyalty customer about to churn who needs a meaningful reason to stay.

The work is more demanding and more meaningful. Retention among support staff improved noticeably in the six months following deployment.

Peak Season Management Changed

The previous Q4 experience - emergency contractor hiring, extended hours, post-peak burnout - did not repeat. The AI chatbot handled the surge in routine contact volume without any staffing changes. Human agents experienced a busier-than-normal Q4, but the increase in complex cases (higher order volumes do produce more disputes and complaints) was within normal team capacity.

Post-Q4 morale and retention data were substantially better than the previous year.

Key Lessons From This Deployment

1. Training Quality Was the Critical Variable

The initial deployment did not perform at the levels described above. The first version of the chatbot, trained on an early draft of the knowledge base, achieved approximately 68% accuracy on a 30-question test battery - acceptable but not excellent. Several product care questions were answered incorrectly because the source documentation was unclear. Return policy edge cases were handled with generic responses because the policy documentation did not address them explicitly.

A two-week knowledge base improvement sprint - adding specificity to product documentation, clarifying return policy edge cases, expanding the shipping FAQ - raised accuracy to 89% on the same test battery. The operational results followed the accuracy improvement directly. Volume reduction accelerated in weeks 5 through 8 as the improved training took effect.
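A test battery like the one described can be as simple as a list of questions paired with phrases a correct answer must contain. The sketch below is a minimal version of that idea, not the team's actual tooling: `fake_chatbot` is a stand-in for whatever call reaches the real chatbot, and the sample questions and answers are placeholders rather than Brightfield policy.

```python
from typing import Callable, List, Tuple

# A test case pairs a customer question with phrases a correct answer must contain.
TestCase = Tuple[str, List[str]]


def is_correct(answer: str, required_phrases: List[str]) -> bool:
    """Case-insensitive check that every required phrase appears in the answer."""
    text = answer.lower()
    return all(phrase.lower() in text for phrase in required_phrases)


def run_battery(ask: Callable[[str], str],
                cases: List[TestCase]) -> Tuple[float, List[str]]:
    """Return (accuracy, failed questions) for a chatbot callable."""
    failures = [q for q, required in cases if not is_correct(ask(q), required)]
    accuracy = (len(cases) - len(failures)) / len(cases)
    return accuracy, failures


def fake_chatbot(question: str) -> str:
    """Hypothetical stand-in for the real chatbot; answers are placeholders."""
    canned = {
        "What is the return window?": "You can return items within 30 days.",
        "Do you ship to Canada?": "Yes, we ship to Canada.",
    }
    return canned.get(question, "I'm not sure. Let me connect you with our team.")


cases: List[TestCase] = [
    ("What is the return window?", ["30 days"]),
    ("Do you ship to Canada?", ["canada"]),
    ("How do I wash the linen throw?", ["cold"]),
]

accuracy, failures = run_battery(fake_chatbot, cases)
print(f"Accuracy: {accuracy:.0%}")
for question in failures:
    print(f"Knowledge base gap: {question}")
```

Phrase matching is crude, but the list of failed questions is the useful output: each one points at a gap in the source documentation, which is where the improvement sprint spent its time.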

The lesson is direct: the AI's output quality is a function of documentation quality. The platform is not the variable. The content is.

2. The Cart Page Trigger Was the Highest-ROI Configuration

Of all the configuration decisions made during implementation, the proactive trigger on the cart and checkout pages produced the most measurable business impact. The cart abandonment improvement - 13 percentage points, representing roughly $26,000 in monthly recovered revenue - was attributable almost entirely to this single trigger.

The mechanism is straightforward: customers in high-intent states encounter a question they cannot answer immediately. The chatbot answers it in under 3 seconds. The purchase completes. Without the trigger, those customers close the tab.

This outcome is likely to generalize to most e-commerce deployments. The checkout page is the highest-value page on the site. A proactive chatbot trigger there, configured with accurate answers to the blocking questions most commonly asked at checkout, is almost always the first optimization worth making.

3. Human Handover for Complaints Was Non-Negotiable

The decision to configure immediate human escalation for damaged goods complaints, refund exception requests, and explicit expressions of significant frustration was validated early. The first attempt to let the AI handle a damaged furniture complaint - where the customer had received a visibly broken item - produced a technically accurate response about the returns process that the customer experienced as dismissive and cold.

"We immediately added damaged goods and strong complaint language to the escalation triggers," said the operations director. "The chatbot is excellent at answering factual questions quickly. But when a customer has a genuinely bad experience, what they need first is to feel heard by a human. The chatbot cannot do that reliably, and trying to make it do so creates more damage than just routing the conversation to a person. Our team handles those conversations now, and we have seen almost no complaints about the AI - because the AI is not trying to handle the conversations it should not be handling."

The principle that emerges is: AI should handle the interactions that are primarily information retrieval, and humans should handle the interactions that are primarily emotional resolution. The configuration work is defining the boundary clearly and making the routing reliable.
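That boundary can be written down as an explicit routing rule. The sketch below uses simple phrase matching as a stand-in for whatever detection the platform provides; the phrase lists, the `Routing` class, and the `route` function are hypothetical, and only the escalation categories and the $200 dispute threshold come from the case study.

```python
from dataclasses import dataclass
from typing import Optional

DISPUTE_THRESHOLD_USD = 200  # from the case study

# Hypothetical phrase lists; a real deployment would tune these from transcripts.
ESCALATION_PHRASES = {
    "damaged_goods": ("damaged", "broken", "cracked"),
    "refund_exception": ("refund exception", "outside the return window"),
    "human_request": ("speak to a human", "real person", "talk to an agent"),
    "strong_complaint": ("unacceptable", "furious", "never ordering again"),
}


@dataclass
class Routing:
    handler: str             # "human" or "chatbot"
    reason: Optional[str]    # escalation category, if any


def route(message: str, is_dispute: bool = False,
          order_value: float = 0.0) -> Routing:
    """Decide whether a conversation stays with the chatbot or goes to a person."""
    text = message.lower()
    if is_dispute and order_value > DISPUTE_THRESHOLD_USD:
        return Routing("human", "order_dispute_over_threshold")
    for reason, phrases in ESCALATION_PHRASES.items():
        if any(phrase in text for phrase in phrases):
            return Routing("human", reason)
    return Routing("chatbot", None)


print(route("My side table arrived broken"))
print(route("Where is my order?"))
```

The escalation checks run before any attempt to answer, so an upset customer is never handed a policy recitation first.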

The ROI Calculation

The financial case for this deployment, over the first 90 days and annualized:

Direct costs avoided:

- Fourth headcount hire deferred: $45,000 annually

- Reduction in Q4 contractor spend: ~$8,000

- Reduction in overtime during peak periods: ~$6,000

Revenue attributed to AI chat:

- Cart abandonment recovery (estimated): ~$78,000 over the first 90 days, at roughly $26,000 per month

- Improved CSAT contribution to repeat purchase rate: estimated 8-12% increase in returning customer revenue (not fully isolated but tracked directionally)

Platform cost:

- AI chatbot platform subscription: approximately $600/year at the volume operated

Net impact year one: Estimated $120,000 to $140,000 in combined cost avoidance and revenue improvement against a platform cost of under $1,000. The investment paid back within the first month.
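The arithmetic behind that range can be reproduced from the line items above. The sketch takes each figure as listed and leaves out the repeat-purchase uplift, which the case study tracks only directionally; the variable names are illustrative.

```python
# Line items as listed in the case study (USD).
cost_avoided = {
    "fourth_hire_deferred": 45_000,
    "q4_contractor_reduction": 8_000,
    "peak_overtime_reduction": 6_000,
}
cart_recovery_revenue = 78_000   # figure as listed above
platform_cost = 600              # approximate annual subscription

total_benefit = sum(cost_avoided.values()) + cart_recovery_revenue
net_benefit = total_benefit - platform_cost
benefit_multiple = total_benefit / platform_cost

print(f"Total benefit:   ${total_benefit:,}")
print(f"Net of platform: ${net_benefit:,}")
print(f"Benefit per platform dollar: {benefit_multiple:.0f}x")
```

The listed items sum to $137,000, which sits inside the stated range. Most of that total depends on two estimates, the deferred hire and the cart recovery figure, so those are the numbers to stress-test when adapting the calculation to another business.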

The ROI case at this scale - a $2M revenue e-commerce business with a modest support operation - is not marginal. It is substantial enough that the relevant question is not whether to deploy, but why the deployment was not made earlier.

Replicating These Results

The outcomes at Brightfield Home are representative of well-executed deployments at similar businesses. The factors that drove the results are known and replicable:

- A knowledge base built on specific, accurate, current documentation

- Proactive triggers on high-intent pages (cart, checkout, high-consideration product pages)

- Clear escalation rules that route emotional and complex interactions to humans

- A post-launch tuning period of 3 to 4 weeks where conversation review drives knowledge base improvements

- Monthly conversation reviews that surface new gaps and keep the knowledge base current

The businesses that see similar results are not those with the most sophisticated technical setups. They are those that invested the most care in building and maintaining the knowledge base the AI is trained on. The technology works. The content makes it work well.
